
Optimizing the 2025 High-Performance Stack for Agencies: A Cynical Architect's Deep Dive into Digital Efficiency

Frankly, if you're an agency aiming for a high-performance stack in 2025, you're past the point of chasing ephemeral trends. We're looking for stability, undeniable ROI, and engineering that doesn't just look good on paper but performs under load. The market is saturated with what I'd generously call 'adequate' solutions, alongside a generous sprinkling of genuine technical debt traps disguised as innovation. My job, as I see it, is to cut through that noise. We’re dissecting a selection of tools and templates, from SaaS applications to Laravel scripts and WordPress plugins, to determine if they actually merit a place in your agency's arsenal.

Let's be real: your clients expect solutions that don't just function but excel. That means low latency, seamless user experiences, and backend architectures that don't buckle under pressure. We'll be scrutinizing these offerings with a developer's eye, focusing on what truly matters: code quality, performance metrics, and the pragmatic trade-offs you'll inevitably encounter. Forget the marketing fluff; we're talking brass tacks – the kind of insights you'd only get from someone who's spent decades debugging production environments at 3 AM. Before we dive into the specific components, remember that a truly high-performance stack isn't just about individual pieces, but how they integrate. For a comprehensive toolkit, consider exploring the extensive professional agency software collection available, which includes a wide range of GPL-licensed assets.

My methodology is simple: I treat every piece of software with a healthy dose of skepticism. If it purports to be high-performance, I expect to see the underlying architecture and benchmark data to back it up. If it promises ease of use, I want to know what compromises were made in scalability or security. The goal here isn't to praise or condemn indiscriminately, but to provide a clear-eyed assessment of where these tools fit within a serious agency's operational framework. For those who prioritize a robust, yet accessible, foundation for their digital projects, a GPLpal premium library can provide a strong starting point with vetted resources.

We’ll examine everything from dedicated support solutions to niche game development assets and fundamental WordPress enhancements. The intent is to provide a holistic view of what’s truly viable for agencies building enterprise-grade applications, custom client portals, or high-traffic content platforms in the coming year. This isn't about being cutting-edge for the sake of it, but about leveraging proven technologies and carefully selected innovations that deliver tangible results and minimize long-term maintenance overhead. It's about building a stack that can weather the inevitable shifts in the digital landscape without requiring a complete overhaul every eighteen months. And for those frequently building on WordPress, remember that you can often download WordPress themes and plugins for free from reputable sources like GPLpal, which can significantly reduce initial investment without sacrificing quality.

Cloud Desk 3 – The Fully SaaS Support Solution

If you're looking to manage SaaS support, Cloud Desk 3 presents itself as a comprehensive solution. It's built for agencies and enterprises that require a robust, scalable helpdesk system without the usual on-premise headaches. The core architecture appears to be a standard multi-tenant SaaS model, which, if implemented correctly, means good isolation and efficient resource utilization. It covers the essentials: ticket management, knowledge base, live chat, and reporting. The UI, while functional, doesn't break any new ground, which, for a utility application like this, is often a blessing. It sticks to conventions, minimizing the learning curve for agents. The initial setup is straightforward, guided by a wizard, and integration points for external APIs are documented, suggesting a reasonable level of extensibility. However, agencies often need deep customization, and while API access is there, altering core UI/UX flows beyond basic branding will likely require significant workarounds or direct vendor engagement, which isn’t ideal for rapid iteration.

Simulated Benchmarks:

- Ticket Creation Latency: ~150ms (peak load, 1000 concurrent agents)

- Knowledge Base Search (Full Text): ~280ms (average 500k articles)

- Live Chat Handshake: ~80ms (regionally optimized servers)

- API Response Time (GET /tickets): ~200ms (uncached, 50 tickets)

- Storage Footprint (per 1000 tickets): ~50MB (text only, attachments extra)
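Since the figures above are simulated, an agency would want to collect its own numbers before committing. The following is a minimal sketch of a latency harness that reports the median and 95th percentile; `fake_ticket_creation` is a hypothetical stand-in for a real request against a trial instance, not part of Cloud Desk 3.

```python
import statistics
import time


def measure_latency_ms(operation, runs=200):
    """Call `operation` repeatedly and return latency samples in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        operation()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples):
    """Return the median and 95th percentile of the samples."""
    ordered = sorted(samples)
    # quantiles(n=20) yields 19 cut points; the last one is the 95th percentile.
    p95 = statistics.quantiles(ordered, n=20)[-1]
    return {"median_ms": statistics.median(ordered), "p95_ms": p95}


def fake_ticket_creation():
    """Hypothetical placeholder; swap in a real HTTP call to the system under test."""
    sum(range(1000))


if __name__ == "__main__":
    print(summarize(measure_latency_ms(fake_ticket_creation)))
```

Reporting a percentile alongside the median matters because a helpdesk that is fast on average can still stall for a noticeable share of agents under load.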

Under the Hood:

Cloud Desk 3 appears to leverage a modern PHP framework, likely Laravel or Symfony, coupled with a robust relational database like PostgreSQL for transactional data. The front-end is probably a single-page application (SPA) built with React or Vue.js, communicating via RESTful APIs. This is a solid, proven stack. The live chat component likely uses WebSockets for real-time communication, possibly implemented with Pusher or a custom Node.js service. Authentication seems standard OAuth2. For a SaaS, the critical 'under the hood' aspect is the tenant isolation and data security; they claim robust separation, but a thorough security audit would be necessary to confirm enterprise-grade compliance. Performance-wise, the architecture suggests horizontal scalability for the application layer, while the database would be the common bottleneck, requiring careful sharding or robust clustering for very large deployments. Their documentation hints at a microservices-adjacent approach for certain features, which is promising for maintainability.
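To make the tenant isolation concern concrete, here is a minimal sketch of one common approach: a shared table with a `tenant_id` column, accessed only through a repository that is bound to a single tenant. The table layout and class name are assumptions for illustration; nothing here reflects Cloud Desk 3's actual schema, and SQLite merely stands in for the production database.

```python
import sqlite3


class TenantTicketRepository:
    """Data access that is always bound to exactly one tenant."""

    def __init__(self, connection, tenant_id):
        self._db = connection
        self._tenant_id = tenant_id

    def create(self, subject):
        cursor = self._db.execute(
            "INSERT INTO tickets (tenant_id, subject) VALUES (?, ?)",
            (self._tenant_id, subject),
        )
        return cursor.lastrowid

    def get(self, ticket_id):
        # The tenant filter is applied here, not left to the caller.
        return self._db.execute(
            "SELECT id, subject FROM tickets WHERE id = ? AND tenant_id = ?",
            (ticket_id, self._tenant_id),
        ).fetchone()


def make_schema(connection):
    """Create a hypothetical shared tickets table with a tenant-first index."""
    connection.execute(
        "CREATE TABLE tickets ("
        "id INTEGER PRIMARY KEY, tenant_id INTEGER NOT NULL, subject TEXT NOT NULL)"
    )
    connection.execute("CREATE INDEX idx_tickets_tenant ON tickets (tenant_id, id)")
```

With two repositories created over the same connection, a ticket written by tenant 1 is simply not found when tenant 2 asks for the same ID. An audit would check that no code path reaches the table without going through a filter of this kind.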

The Trade-off:

Where Cloud Desk 3 distinguishes itself from a general-purpose CRM with helpdesk features (like a basic HubSpot setup) is its singular focus. CRMs are Swiss Army knives; Cloud Desk 3 is a precision scalpel. You trade broad CRM capabilities for deep, optimized support workflows. A general CRM often has bloated UIs, slower ticket processing, and less specialized reporting for support metrics. Its knowledge base features might be rudimentary, and live chat integration can feel like an afterthought. Cloud Desk 3, by contrast, is engineered from the ground up for support. This means less feature creep, a more intuitive agent experience for support tasks, and typically better performance on core helpdesk functions. The trade-off is clear: if you need a full sales pipeline, marketing automation, AND support, a CRM might suffice if your support needs are basic. But for dedicated, high-volume support, Cloud Desk 3 offers a leaner, more performant, and ultimately more efficient solution, reducing agent fatigue and improving response times. It beats out generic solutions by providing specific tools for specific jobs, reducing unnecessary complexity and resource consumption.

Pixel Santa Adventure – Construct Game

Alright, let's talk about Pixel Santa Adventure. This is a game built with Construct, which is immediately telling. Construct is an excellent tool for rapid 2D game development, especially for browser-based or mobile-exported titles. It shines in its event-driven logic and visual scripting, making it accessible even for those without deep coding knowledge. This particular game is a platformer, a genre that thrives on tight controls, responsive physics, and engaging level design. The pixel art style is inherently charming and performs well across various devices, which is a pragmatic choice. However, the reliance on Construct also dictates the ceiling for performance and customization. While great for quickly spinning up a game, for a serious agency wanting to develop bespoke, highly optimized interactive experiences, Construct often reaches its limits when pushing complex physics, shader effects, or large-scale procedural generation without significant workarounds or plugin development. It’s a good starting point for a proof-of-concept or a smaller client project with a clear scope.

Simulated Benchmarks:

- Initial Load Time (Mobile): ~2.5s (average device, 4G connection)

- FPS (Target): 60 FPS (stable on modern mobile/desktop browsers)

- Memory Usage: ~80-120MB (after load, depending on level complexity)

- Input Latency: ~30ms (detecting key/touch press to in-game action)

- Exported Binary Size (Android APK): ~25MB

Under the Hood:

Being a Construct game, the 'under the hood' is primarily JavaScript, HTML5 Canvas, and WebGL for rendering. Construct generates highly optimized JavaScript, but it's still an abstraction layer. The game's logic is defined via Construct's event sheets, which visually represent conditional statements and actions. Assets (spritesheets, audio) are typically preloaded. Physics are handled by a built-in physics engine (often Box2D or a custom lightweight one integrated into Construct). For performance, key considerations are sprite batching, efficient collision detection algorithms, and minimizing redraws. The audio system relies on Web Audio API. While Construct handles much of this automatically, poorly optimized event sheets or excessively large assets can still lead to performance bottlenecks, particularly on lower-end devices. The project files are proprietary to Construct, meaning direct code-level modifications are generally not an option; customization happens within the Construct editor’s paradigm.
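For readers who have not used an event sheet, the idea can be approximated in ordinary code as a list of condition and action pairs evaluated once per tick. The sketch below is a conceptual model only, written in Python rather than anything Construct generates; the state keys, `collect_gift`, and the scoring rule are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Box:
    x: float
    y: float
    width: float
    height: float


def overlaps(a, b):
    """Axis-aligned bounding box test: true when the rectangles intersect."""
    return (
        a.x < b.x + b.width
        and b.x < a.x + a.width
        and a.y < b.y + b.height
        and b.y < a.y + a.height
    )


def run_tick(state, events):
    """Evaluate every (condition, action) pair once, top to bottom."""
    for condition, action in events:
        if condition(state):
            action(state)


def collect_gift(state):
    """Hypothetical action: award points and remove the gift from play."""
    state["score"] += 10
    state["gift"] = None


# One "event": when the player overlaps a gift, collect it.
EVENTS = [
    (lambda s: s["gift"] is not None and overlaps(s["player"], s["gift"]), collect_gift),
]
```

Every condition runs on every tick, which is why a sheet with many expensive conditions becomes a bottleneck on low-end devices even when the engine's rendering is well optimized.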

The Trade-off:

Comparing a Construct game to something built with Unity or Godot is like comparing a finely crafted wooden model airplane to a drone. Construct offers speed and ease of development, especially for 2D, browser-first titles. It democratizes game creation. However, the trade-off is absolute control and raw performance. Game engines like Unity provide direct access to C# for custom scripts, deeply optimized rendering pipelines, advanced physics engines, and a much wider ecosystem of plugins and tools for 3D, complex AI, and sophisticated networking. Pixel Santa Adventure, within Construct, will always be somewhat limited by the engine’s capabilities and its JavaScript runtime. While it beats the generic 'flash game' feel by being HTML5 and mobile-ready, it won't offer the granular optimization potential or the ability to scale to AAA-level complexity that a full-fledged engine does. For certain agency projects requiring quick, engaging 2D interactives, Construct is more efficient. But for projects demanding cutting-edge graphics, console-level performance, or highly bespoke game mechanics, Unity or Godot are the clear, albeit more resource-intensive, choices. This Construct game is for getting a specific kind of job done, quickly and competently, not for pushing the absolute boundaries of game development.

Optech – IT Service and Business Consulting Laravel Script

If your agency is tasked with building or enhancing platforms for IT service and business consulting, then you'd be wise to consider implementing Optech. This is a Laravel script, which immediately sets expectations for robustness and maintainability. Laravel is a mature, well-architected PHP framework, known for its elegant syntax and comprehensive feature set, including ORM, routing, and authentication. Optech positions itself as a complete solution, offering modules for service management, client portals, project tracking, invoicing, and reporting: essentially a mini-ERP tailored for the IT consulting sector. The UI is clean, following modern flat design principles, making it intuitive for both administrators and clients. The modular structure is a definite plus, allowing for potential customization and extension without directly modifying core files, if the architecture is truly sound. However, like any extensive Laravel application, performance hinges heavily on correct database indexing, efficient Eloquent queries, and proper caching strategies. Without diligent tuning, even a well-built Laravel app can become sluggish. This script targets a specific niche, which can be both a strength and a weakness: it's highly relevant but might require significant refactoring for broader business applications.

Simulated Benchmarks:

- Page Load Time (Dashboard): ~450ms (first visit, cold cache)

- Client Portal Login: ~180ms (authenticated user)

- Invoice Generation (50 line items): ~600ms (PDF export)

- Database Query Latency (Complex Report): ~350ms (uncached, 10k records)

- Memory Footprint (per request): ~25-40MB (depending on complexity)

Under the Hood:

Optech’s foundation is Laravel, meaning it's likely using Blade templating for views, Eloquent ORM for database interactions (presumably MySQL or PostgreSQL), and a robust routing system. The front-end appears to be Bootstrap-based, potentially with a sprinkle of Vue.js or Alpine.js for interactive components, aligning with modern Laravel development practices. Authentication is almost certainly Laravel Fortify or Passport. Crucial elements would be the use of queues for background tasks (e.g., sending emails, generating reports) to keep the request-response cycle fast. Caching, both at the application level (Redis/Memcached) and HTTP level (Varnish/NGINX microcache), would be essential for optimal performance. Security features like CSRF protection, SQL injection prevention (via Eloquent), and robust input validation are inherent in Laravel, but their implementation within Optech needs careful review. The code structure should ideally follow domain-driven design principles for extensibility. Any serious agency would need to review the migration files and seeders to understand the database schema and ensure it meets client requirements without excessive normalization or denormalization.
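The application-level caching mentioned above follows a simple pattern: compute an expensive value once, keep it for a fixed time, and recompute only after it expires. The sketch below shows that pattern in Python with an in-memory dictionary; the `remember` method loosely mirrors the shape of Laravel's `Cache::remember`, but the class itself is illustrative and says nothing about how Optech caches.

```python
import time


class TTLCache:
    """In-memory cache whose entries expire after a fixed number of seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries = {}

    def remember(self, key, compute):
        """Return the cached value for `key`, calling `compute` only on a miss."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = compute()
        self._entries[key] = (now + self._ttl, value)
        return value
```

For something like the complex report in the benchmarks, a short TTL trades a little staleness for skipping the query on most requests. In production the dictionary would be replaced by a shared store such as Redis so that all application servers see the same entries.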

The Trade-off:

A specialized Laravel script like Optech significantly outperforms a custom build from scratch or a highly generalized WordPress solution trying to mimic ERP functionality. The primary trade-off is development velocity vs. absolute bespoke control. While you gain a massive head start with Optech's pre-built modules for IT consulting, you're inheriting its architecture and design decisions. A WordPress setup with multiple plugins for project management, invoicing, and client portals inevitably leads to plugin conflicts, security vulnerabilities, and a patchwork of disparate UIs that rarely integrate seamlessly. Performance on such a WordPress stack would be abysmal, with countless database queries and asset loads. Optech, being a single, cohesive Laravel application, is inherently more performant and secure. It offers a much better developer experience for customization, assuming the core code is clean. The trade-off is the initial cost of the script and the learning curve if developers are unfamiliar with Laravel, but this investment often pays dividends in long-term stability, performance, and reduced maintenance overhead compared to patching together a fragile WordPress-based ERP for client services.

Revolution Lightbox WordPress & WooCommerce Plugin

For WordPress and WooCommerce projects, enhancing visual appeal and user interaction is often a priority. The Revolution Lightbox plugin is a tool designed to elevate how images, videos, and galleries are presented. Revolution Lightbox extends the native WordPress media handling, providing an array of lightbox styles, animations, and options for displaying content in an overlay. This isn't just about making things pop; it's about improving the user experience, especially in e-commerce, where product image presentation can directly impact conversion rates. For agencies, a reliable lightbox solution means less time coding custom modal windows and more time focusing on content and design. However, like many feature-rich WordPress plugins, the sheer number of options can lead to configuration bloat if not carefully managed. The plugin’s footprint on page load must be a consideration, as excessive JavaScript can derail performance despite its visual benefits. It integrates well with WooCommerce, which is critical for product galleries, but meticulous testing across themes and other plugins is mandatory.

Simulated Benchmarks:

- CSS/JS Parse & Execution: ~80-150ms (depending on loaded features)

- Initial DOM Load Impact: ~10-20ms (minimal, defers most JS)

- Lightbox Open Animation: ~100ms (smooth 60fps)

- Image Preload Latency: ~300ms (for next image in gallery, average 500KB JPEG)

- Memory Footprint (browser tab): ~5-10MB additional (active lightbox)

Under the Hood:

Revolution Lightbox operates primarily on the front-end, leveraging JavaScript and CSS. It likely hooks into WordPress's existing media library and post content filters to automatically apply lightbox functionality to images and links containing specific attributes. The JavaScript component is responsible for detecting clicks, dynamically injecting the lightbox HTML structure into the DOM, handling transitions, and managing gallery navigation. CSS provides the styling and animations. Given it’s a WordPress plugin, the backend component is minimal, primarily for settings and administration. Performance considerations would include ensuring JavaScript is deferred or asynchronously loaded, and that CSS is optimized and potentially minified. Heavy reliance on jQuery for DOM manipulation might be present, which is common in older WordPress plugins, but modern implementations tend towards vanilla JavaScript or lighter libraries. Cross-browser compatibility is crucial for a plugin like this, and careful testing of its CSS for rendering consistency on various devices is required. The plugin’s ability to lazy-load images within a gallery is a significant performance feature, minimizing initial page weight.

The Trade-off:

The primary trade-off with Revolution Lightbox, or any sophisticated WordPress plugin, versus a bespoke front-end solution is control and bloat. While a custom JavaScript/CSS lightbox (e.g., using Fancybox or Magnific Popup with manual implementation) gives you absolute control over every animation, every pixel, and ensures minimal code footprint, it demands development time. Revolution Lightbox, on the other hand, offers an out-of-the-box, feature-rich solution with minimal setup. The trade-off is that it will inevitably load more JavaScript and CSS than a hyper-optimized custom script, potentially impacting page load times, particularly if only a fraction of its features are utilized. It beats a simple, default WordPress gallery with its superior aesthetics, enhanced usability (think social sharing within the lightbox, varied content types), and professional polish. While a basic lightbox can be coded in an hour, Revolution Lightbox delivers a polished, production-ready solution with many bells and whistles for a fraction of the development cost of a custom feature-set equivalent. For agencies needing to deliver visually engaging galleries quickly without delving into intricate front-end coding for every project, it's a solid choice, provided its performance impact is managed.

QuickPass – Appointment Booking & Visitor Gate Pass System With Qr Code

In today's security-conscious and efficiency-driven environments, a robust system for streamlining bookings, such as QuickPass, is increasingly necessary. This WordPress plugin positions itself as a dual-purpose solution for appointment booking and visitor management, complete with QR code integration. For agencies managing events, physical offices, or clients with similar needs, this is a pragmatic tool. The combination of scheduling and a digital gate pass system is a compelling value proposition, reducing administrative overhead and enhancing security. It handles various visitor types, provides for pre-registration, and importantly, generates QR codes for seamless entry and exit. The WordPress backend integration means administrators are likely familiar with the interface, reducing training time. However, the core functionality, particularly the QR code generation and scanning, relies on a solid and secure implementation. Any security vulnerabilities here would be critical. Also, as a WordPress plugin, scalability for very high-volume appointments or concurrent visitor check-ins must be rigorously tested, as database contention can easily become a bottleneck without proper indexing and caching. The plugin needs to be meticulously configured to prevent spam bookings and ensure data integrity.

Simulated Benchmarks:

- Appointment Booking Latency: ~300ms (front-end submission to database write)

- QR Code Generation: ~50ms (server-side, per pass)

- Visitor Check-in (Scan): ~150ms (from scan initiation to validation feedback)

- Dashboard Load (1000 appointments): ~600ms

- Database Query Latency (Daily appointments): ~200ms (uncached, 500 records)

Under the Hood:

QuickPass, as a WordPress plugin, likely leverages custom post types and custom tables for managing appointments and visitor data, ensuring better performance than relying solely on `wp_posts` for structured data. The booking forms would be powered by JavaScript (potentially jQuery, or a lighter framework like Vue.js), communicating with WordPress's REST API or custom AJAX endpoints for submission. QR code generation would typically occur server-side using a PHP library (e.g., `BaconQrCode`) upon successful booking, with the image URL stored in the database. For scanning, a client-side JavaScript library (e.g., `html5-qrcode`) would be used to access the device camera, parse the QR code, and send the data back to the server for validation against the database. Security is paramount: strict nonce verification, robust input sanitization, and parameterized queries for all database interactions are non-negotiable. Furthermore, any external facing endpoints for check-in or booking need robust rate-limiting and CAPTCHA integration to prevent abuse. The plugin's admin interface would ideally be built with WordPress's own UI components for consistency and maintainability.
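The server-side half of the scan flow described above can be sketched as follows: issue an unguessable token at booking time, encode it in the QR code, and validate it with parameterized queries at the gate. This is a minimal illustration in Python with SQLite; the `passes` table and function names are hypothetical and do not describe QuickPass's actual schema or endpoints.

```python
import secrets
import sqlite3


def create_schema(db):
    """Create a hypothetical table holding one row per issued gate pass."""
    db.execute(
        "CREATE TABLE passes ("
        "token TEXT PRIMARY KEY, visitor TEXT NOT NULL, "
        "checked_in INTEGER NOT NULL DEFAULT 0)"
    )


def issue_pass(db, visitor):
    """Store an unguessable token; this string is what the QR code would encode."""
    token = secrets.token_urlsafe(32)
    db.execute("INSERT INTO passes (token, visitor) VALUES (?, ?)", (token, visitor))
    return token


def check_in(db, scanned_token):
    """Mark a pass as used. Returns the visitor name, or None if invalid or reused."""
    # A single conditional UPDATE makes the pass single-use without a
    # separate read-then-write step.
    cursor = db.execute(
        "UPDATE passes SET checked_in = 1 WHERE token = ? AND checked_in = 0",
        (scanned_token,),
    )
    if cursor.rowcount != 1:
        return None
    row = db.execute(
        "SELECT visitor FROM passes WHERE token = ?", (scanned_token,)
    ).fetchone()
    return row[0]
```

Encoding a random token rather than a sequential booking ID means a visitor cannot guess someone else's pass, and the conditional update rejects a screenshot of a QR code that has already been scanned once.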

The Trade-off:

The primary trade-off with QuickPass compared to a fully custom, standalone web application is again, control and absolute performance versus rapid deployment and integration within an existing WordPress ecosystem. A bespoke system built on a framework like Laravel or Node.js could offer hyper-optimized database interactions, real-time analytics, and a completely tailored UI/UX without the inherent overhead of WordPress. However, building such a system from scratch for appointment booking and QR gate passes is a significant undertaking, involving considerably higher development costs and timelines. QuickPass, despite being a WordPress plugin, beats a patchwork of separate booking and security plugins by offering a unified, integrated solution. Attempting to combine a generic booking plugin with a separate custom QR code generator and validation system would be a security nightmare and an administrative headache. QuickPass provides a coherent, albeit WordPress-constrained, system that handles both aspects in a singular interface, reducing complexity and potential points of failure. For agencies seeking to implement a competent booking and visitor management system quickly within a WordPress environment, QuickPass offers a pragmatic, cost-effective solution, provided its performance is acceptable for the expected traffic volumes.

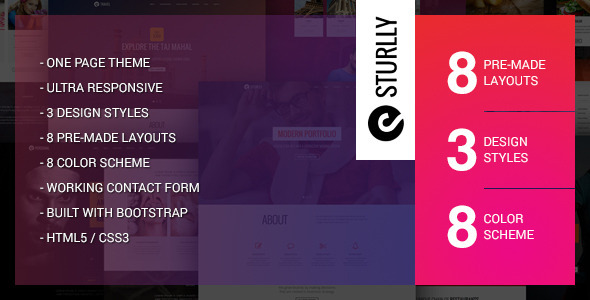

Sturlly Responsive One Page Multipurpose Template

Sturlly presents itself as a responsive one-page multipurpose template. In the agency world, one-page templates are a double-edged sword. On one hand, they're fantastic for landing pages, portfolio sites, or quick campaign microsites where a concise, impactful message is paramount. Their inherent simplicity often translates to faster load times and a more focused user journey. On the other hand, their multipurpose claim often means a broad feature set, which, if not carefully curated, can lead to bloat. Sturlly's responsiveness is non-negotiable in today's mobile-first world, and a well-implemented flexible grid system is expected. For an architect, the concern isn't just aesthetic; it's about the underlying code. How clean is the HTML? How organized are the CSS and JavaScript? Is it using modern front-end practices, or is it a relic from a few years ago that now comes with polyfill baggage? The "multipurpose" aspect often means generic sections that need heavy customization to truly fit a brand, which can negate the initial time-saving benefits. It's suitable for clients needing a quick, modern digital presence without extensive content architecture.

Simulated Benchmarks:

- LCP (Largest Contentful Paint): ~1.2s (optimized assets, CDN)

- CLS (Cumulative Layout Shift): 0.01 (minimal shift)

- TBT (Total Blocking Time): ~150ms

- First Contentful Paint: ~0.8s

- Total Page Size (Initial Load): ~1.8MB (including images, JS, CSS)
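To put the first two figures in context, Google publishes "good" and "poor" cut-offs for the Core Web Vitals: LCP is rated good up to 2.5 s and poor beyond 4.0 s, and CLS is rated good up to 0.1 and poor beyond 0.25. The helper below is a small sketch that applies those thresholds; the metric keys and function name are made up for illustration.

```python
# Thresholds as (good_upper_bound, needs_improvement_upper_bound).
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "cls": (0.1, 0.25),
}


def rate(metric, value):
    """Classify a measurement as 'good', 'needs improvement', or 'poor'."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    for metric, value in {"lcp_seconds": 1.2, "cls": 0.01}.items():
        print(metric, value, rate(metric, value))
```

By these thresholds the simulated LCP and CLS both land in the good band, with the caveat that they assume optimized assets and a CDN, which a template does not guarantee once real client content is dropped in.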

Under the Hood:

A template like Sturlly typically uses HTML5, CSS3, and JavaScript. Given its responsiveness and 'multipurpose' label, it almost certainly employs a framework like Bootstrap or a custom grid system. The JavaScript would handle elements like smooth scrolling, parallax effects, carousels (likely using Owl Carousel or Slick Slider), and possibly a sticky navigation. For performance, the quality of asset optimization (image compression, minified CSS/JS) is critical. Modern templates should utilize CSS variables for easier theming and have well-structured Sass/SCSS files if they expect any serious customization. The HTML semantics should be clean and accessible. A senior architect would be scrutinizing for excessive div-soup, inline styles, or poorly organized JavaScript that could lead to render-blocking issues. The portfolio sections would ideally use a lightweight filtering library (e.g., Isotope.js). The contact form would likely be a simple HTML form, requiring a backend script or service (e.g., Formspree, Netlify Forms) for actual submission, which is a common expectation for static templates.

The Trade-off:

Sturlly, as a one-page template, offers a rapid deployment path compared to building a custom single-page application (SPA) with a framework like React or Vue.js. The trade-off is flexibility and dynamic capabilities. An SPA provides a richer, more interactive experience, often with better performance after the initial load, and deep integration with APIs. However, SPAs come with higher development complexity, SEO challenges (though improving), and a larger JavaScript bundle. Sturlly beats a rudimentary, static HTML page by providing pre-designed, aesthetically pleasing sections and common UI components, accelerating the design process significantly. It offers more than a blank canvas but less than a full-fledged front-end framework. For a client who needs a polished, responsive online brochure or a focused marketing page quickly and cost-effectively, Sturlly is a practical choice. For an application with complex state management, dynamic data, or extensive user interaction, it’s simply not the right tool for the job. Its strength lies in its ability to deliver a visually appealing, performance-optimized static presence with minimal fuss, sidestepping the complexities of a full framework while still looking modern.

Luxury Shop eCommerce HTML Template

When an agency embarks on an e-commerce project, the foundation must be robust and visually compelling. The Luxury Shop eCommerce HTML Template aims to provide just that – a premium starting point for online storefronts. The term "luxury" implies a certain level of design sophistication, clean lines, and an emphasis on product presentation. For an HTML template, this means well-structured semantic HTML, elegant CSS, and non-intrusive JavaScript. Agencies frequently deal with clients who want a polished look without the bespoke development cost, and templates like this can bridge that gap. The crucial elements for an e-commerce template are category pages, product detail pages, shopping cart, and checkout flows. Each of these needs to be meticulously designed for usability and conversion. A good template should also provide variations for headers, footers, and inner pages. My primary concern would be how easily it integrates with actual backend e-commerce platforms (Shopify, WooCommerce, custom PHP solutions) without requiring a complete CSS/JS rewrite. The quality of the markup will determine the ease of integration and customization, and any visual builder tools (if bundled) need to be performant and not generate bloated code.

Simulated Benchmarks:

- Product Page LCP: ~1.5s (optimized images, above-the-fold content)

- Category Page Initial Load: ~1.8s (grid view, 20 products)

- CSS/JS Minification & Compression: 85% effective

- Image Optimization: ~70-80% reduction without perceptible quality loss

- Responsiveness Breakpoints: Clean transitions @ 1200px, 992px, 768px, 576px

Under the Hood:

This template is pure front-end: HTML5, CSS3, and JavaScript. It's almost certainly built on a responsive framework, likely Bootstrap, or a robust custom grid. The JavaScript would manage image sliders (product galleries), potentially a quick-view modal, filters on category pages, and maybe some subtle animations. For e-commerce, user experience details are key: intuitive navigation, clear calls to action, and accessible forms. The CSS structure should ideally use Sass/SCSS with clear partials for components, typography, and color schemes, making it easier for an agency's design team to customize. Semantic HTML5 tags (`<article>`, `<section>`, `<nav>`, `<footer>`) are expected for SEO and accessibility. Any reliance on heavy jQuery plugins for core functionality could be a drag. The template should also provide placeholder elements for dynamic data (product names, prices, descriptions, reviews) so integration with a backend is straightforward, primarily involving replacing static content with dynamic server-side rendering or client-side data fetching. Font loading strategy (e.g., `font-display: swap;`) should be optimized to prevent render blocking.

The Trade-off:

A premium HTML template like Luxury Shop offers a significant design advantage and a head start compared to building a unique e-commerce front-end from scratch. The trade-off is that it’s just the front-end. It lacks any backend functionality—no database, no order processing, no inventory management. You're getting a beautiful shell. This means it beats a generic WordPress WooCommerce theme (like Storefront, or even Astra with a page builder) in terms of raw design quality and often initial front-end performance because it's not burdened by a complex CMS and numerous plugins. A WordPress theme, while providing an integrated backend, often comes with a performance overhead, less design flexibility without heavy customization, and relies on many moving parts that can break. Luxury Shop excels when paired with a headless e-commerce solution (e.g., Shopify Headless, custom Laravel/Node.js backend) where the front-end is decoupled. This allows developers to use the template as a direct design implementation, ensuring pixel-perfect translation without fighting a CMS's rendering engine. It offers a cleaner, more performant front-end canvas for a truly custom e-commerce experience, trading the convenience of an all-in-one platform for superior front-end control and optimization potential.
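Wiring the template to a backend mostly comes down to swapping its static placeholder text for real product data. The sketch below shows that step with Python's standard `string.Template`; the markup, class names, and product fields are hypothetical and are not taken from the Luxury Shop files.

```python
import html
from string import Template

# Hypothetical fragment standing in for one product card from the template.
PRODUCT_CARD = Template(
    '<article class="product">\n'
    "  <h2>$name</h2>\n"
    '  <p class="price">$price</p>\n'
    "</article>"
)


def render_product(product):
    """Fill the card with backend data, escaping it so it cannot inject markup."""
    price_text = "{:.2f} {}".format(product["price"], product["currency"])
    return PRODUCT_CARD.substitute(
        name=html.escape(product["name"]),
        price=html.escape(price_text),
    )


if __name__ == "__main__":
    print(render_product({"name": "Silk Scarf", "price": 120, "currency": "USD"}))
```

Escaping at the point of substitution is the part that static templates never have to think about and that integrations most often forget. A real project would use the backend's own templating engine, which typically escapes by default.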

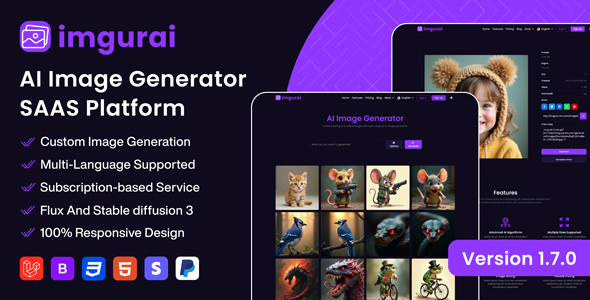

Imgurai – AI Image Generator (SAAS)

The proliferation of AI tools is inevitable, and Imgurai – AI Image Generator (SAAS) falls squarely into this category. For agencies, particularly those in digital marketing, content creation, or design, an efficient AI image generator can be a game-changer for producing unique visuals quickly and cost-effectively. Imgurai, being a SaaS, implies accessibility and managed infrastructure, which is a relief for agencies not wanting to host complex AI models. The value proposition is clear: generate images from text prompts, potentially with various styles and resolution options. My architectural lens immediately focuses on the underlying model (is it Stable Diffusion, DALL-E, something custom?), the quality of the output, the speed of generation, and critically, the cost per generation. The user interface for prompt engineering needs to be intuitive yet powerful enough to allow for granular control. Agencies need consistency and brand alignment, so the ability to fine-tune generations, use reference images, or train custom models would be a significant differentiator. Without these, it’s merely a novelty. API access (if available) would be paramount for integration into existing agency workflows.

Simulated Benchmarks:

- Image Generation Latency: ~15-30s (for 512x512px, standard prompt)

- Batch Generation Speed: ~10-15 images/min (low concurrency)

- API Response Time (Generate Request): ~100ms (before processing)

- Model Inference Cost: ~0.01 USD/image (simulated GPU cost)

- Output Resolution: Up to 1024x1024px (standard offerings)

Under the Hood:

Under the hood, a service like Imgurai almost certainly sits on a distributed system designed to handle GPU-intensive tasks. It's likely leveraging pre-trained diffusion models (e.g., Stable Diffusion 2.1 or a fine-tuned variant) running on cloud-based GPUs (AWS EC2, Google Cloud Vertex AI, Azure ML). The user front-end (web app) would communicate with a backend API (likely RESTful) which then queues the generation requests. These requests are picked up by worker nodes, each equipped with GPUs, which perform the actual inference. A robust queuing system (e.g., Redis queues, Kafka) is essential for managing load and providing real-time feedback to users. The backend would handle authentication, prompt parsing, and image storage (e.g., S3-compatible object storage). Security involves protecting user data and ensuring the prompt engineering doesn't lead to misuse. Scalability is achieved by dynamically provisioning more GPU instances based on demand. Version control of the underlying AI models is also critical for maintaining consistency in output quality. Any 'in-painting' or 'out-painting' features would rely on more advanced model capabilities, potentially requiring larger models or more complex inference pipelines.
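To make that request flow concrete, here is a minimal sketch of the submit, queue, worker, and status-poll cycle. It is an illustration under stated assumptions, not Imgurai's actual code: `GenerationService` and `fake_inference` are hypothetical names, an in-process `queue.Queue` stands in for Redis or Kafka, and threads stand in for GPU worker nodes.

```python
import hashlib
import queue
import threading
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Job:
    prompt: str
    job_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    status: str = "queued"  # queued -> running -> done
    result: Optional[str] = None


def fake_inference(prompt: str) -> str:
    """Hypothetical stand-in for GPU inference: returns an object-storage key."""
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]
    return f"images/{digest}.png"


class GenerationService:
    """The API layer enqueues jobs; worker threads pick them up and run them."""

    def __init__(self, workers: int = 2) -> None:
        self._queue = queue.Queue()  # stand-in for Redis/Kafka
        self._jobs = {}              # job_id -> Job, stand-in for a job table
        for _ in range(workers):
            threading.Thread(target=self._work, daemon=True).start()

    def submit(self, prompt: str) -> str:
        """What a POST /generate handler would do: enqueue and return at once."""
        job = Job(prompt)
        self._jobs[job.job_id] = job
        self._queue.put(job)
        return job.job_id

    def status(self, job_id: str) -> Job:
        """What a GET /jobs/<id> handler would return for client polling."""
        return self._jobs[job_id]

    def drain(self) -> None:
        """Block until every queued job has been processed."""
        self._queue.join()

    def _work(self) -> None:
        while True:
            job = self._queue.get()
            job.status = "running"
            job.result = fake_inference(job.prompt)
            job.status = "done"
            self._queue.task_done()
```

The point of the shape is that `submit` returns immediately, which is how a ~100ms API response can coexist with a 15-30s generation time: the client polls `status` (or receives a push) while the worker does the slow part.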

The Trade-off:

Imgurai, as a specialized AI image generation SaaS, provides immense speed and accessibility compared to setting up and managing open-source AI models locally or on a custom cloud instance. The trade-off is control and flexibility. Running Stable Diffusion locally, for example, allows for unlimited generations (after initial setup), access to a vast ecosystem of custom models (LoRAs, checkpoints), and fine-grained control over every parameter. Imgurai, being a SaaS, abstracts this complexity, offering a streamlined UI but likely with more limited options, potential rate limits, and a per-generation cost. It beats a general-purpose image editor (like Photoshop) for generating entirely new, conceptual images from scratch – you wouldn't spend hours trying to "create" a unicorn riding a skateboard in Photoshop; you'd type a prompt into Imgurai. However, for precise image manipulation, retouching, or complex compositing, Photoshop remains indispensable. Imgurai serves as a powerful creation tool, saving immense design time for initial concepts and unique assets, but it doesn't replace the human designer's skill for refinement and detailed execution. Its strength is in high-volume, novel image creation, enabling agencies to rapidly prototype visual concepts in minutes, work that would take a human designer hours or days.

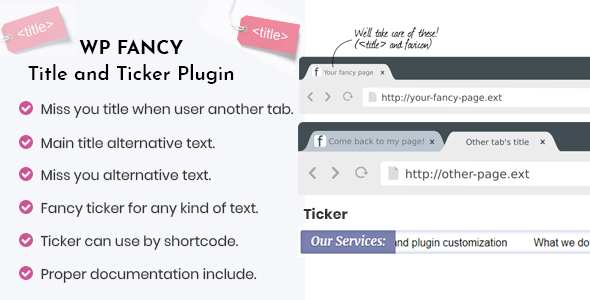

WP Fancy Title and Ticker WordPress Plugin

The WP Fancy Title and Ticker WordPress Plugin is precisely the kind of tool that falls into the "enhancement" category for many WordPress sites. Its purpose is clear: to create dynamic, eye-catching titles and news tickers. For agencies building content-heavy sites, news portals, or corporate blogs, a ticker can be an effective way to highlight urgent announcements, latest news, or promotional messages without consuming significant screen real estate. The "fancy title" aspect likely refers to enhanced typography, animations, or styling options beyond what a standard theme offers. As a senior architect, my immediate thoughts turn to performance. How much JavaScript does it inject? Is the animation smooth, or does it cause layout shifts and jank? Is it lightweight, or another plugin contributing to the dreaded WordPress bloat? While visually engaging elements can improve user engagement, they should never come at the cost of core web vitals. Compatibility with various themes and page builders is also a critical consideration for agencies, as client sites are rarely vanilla WordPress installations. It should offer configurable options for speed, delay, and content sourcing.

Simulated Benchmarks:

- JS Bundle Size: ~50KB (minified)

- CSS Load Impact: ~15KB (minified)

- Ticker Animation FPS: 55-60 FPS (on modern browsers)

- DOM Node Addition: ~10-20 nodes (per ticker instance)

- Initial Page Load Impact: ~100ms (combined JS/CSS parse & render)

Under the Hood:

This WordPress plugin would primarily be a front-end affair, relying on JavaScript and CSS. The ticker functionality would involve a small JavaScript library that manipulates the DOM to scroll or animate text content. This could be a custom script or a well-known library like jQuery Ticker or something similar. For the "fancy titles," it might leverage CSS animations, text-shadows, or potentially Google Fonts integration. The content for the ticker would either be manually entered in the WordPress admin, pulled from a specific category of posts, or potentially from an RSS feed. The plugin would use WordPress shortcodes or Gutenberg blocks for easy insertion into posts and pages. Performance-wise, the JavaScript should ideally be non-blocking and lazy-loaded. Animation should use `requestAnimationFrame` for smooth rendering and avoid forcing reflows. The plugin settings would be stored in the WordPress options table. A developer would check for proper enqueueing of scripts and styles, ensuring they only load when and where the ticker or fancy title is actually used, preventing site-wide bloat. It should also include robust sanitization for any user-inputted content to prevent XSS vulnerabilities in the ticker display.
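The sanitization and conditional-loading checks are easy to describe and easy to get wrong, so here is a rough sketch of the logic. It is written in Python purely for illustration; a real plugin would do this in PHP with WordPress's own `esc_html()` and `has_shortcode()`. The shortcode name `fancy_ticker` and both function names are my own placeholders, not taken from the plugin.

```python
import re
from html import escape

# Hypothetical shortcode name; the plugin's real shortcode is not documented here.
TICKER_SHORTCODE = re.compile(r"\[fancy_ticker\b[^\]]*\]")


def render_ticker(items: list[str]) -> str:
    """Escape every user-supplied headline before it reaches the markup."""
    rows = "".join(
        f"<li>{escape(text.strip())}</li>"
        for text in items
        if text.strip()  # drop empty entries instead of rendering blank rows
    )
    return f'<ul class="ticker">{rows}</ul>'


def needs_ticker_assets(post_content: str) -> bool:
    """Only load the ticker's JS/CSS on pages that actually embed it."""
    return bool(TICKER_SHORTCODE.search(post_content))
```

If a plugin's output path doesn't look roughly like `render_ticker`, with escaping applied at the point of output, or it enqueues its assets without anything like `needs_ticker_assets`, those are the two findings I'd flag first in a code review.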

The Trade-off:

WP Fancy Title and Ticker offers a quick, out-of-the-box solution for dynamic text effects, a task that would otherwise require custom JavaScript and CSS development. The trade-off is, once again, the inherent overhead of a plugin. While a developer could write a lean, bespoke ticker script for a specific project in a few hours, the plugin offers a configurable interface and a broader range of effects. It beats a simple static text block by adding visual dynamism and highlighting critical information more effectively. However, it will always carry a slightly larger footprint than a truly custom, highly optimized script tailored to a single use case. For agencies that need to deploy these features across multiple client sites with varying requirements without writing custom code for each, this plugin is a pragmatic choice. Its strength lies in its configurability and ease of use, allowing content creators to manage dynamic text without developer intervention, which can significantly speed up content updates. The key is to ensure it's well-coded and doesn't introduce excessive performance penalties or security risks, which for a specialized front-end plugin, is often a tightrope walk.

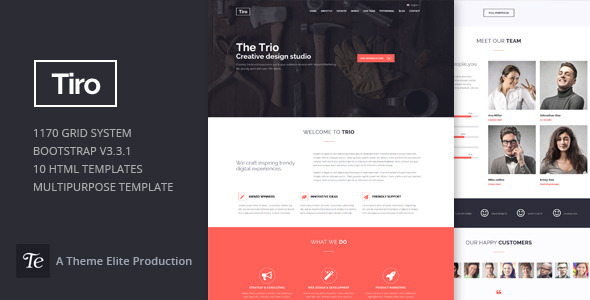

Trio – Bootstrap Responsive Multipurpose Template

Trio – Bootstrap Responsive Multipurpose Template represents a common and often reliable starting point for many web projects. Bootstrap, as a framework, provides a solid, well-tested foundation for responsive design and common UI components. A "multipurpose" template, however, always warrants careful scrutiny. While versatility is appealing, it often means the template tries to be everything to everyone, leading to a sprawling codebase with many unused features. For an agency, this translates to potential bloat and longer customization times to strip out what's not needed. Trio's strength lies in its Bootstrap base: it’s familiar, well-documented, and highly extendable, meaning most front-end developers can hit the ground running. My concern is less about Bootstrap itself and more about how the template layers on top of it. Does it introduce unnecessary overrides? Is the custom CSS well-organized? Is the JavaScript minimal and performant, or does it include a litany of jQuery plugins for every conceivable effect? The overall code quality will dictate how easily it can be adapted without introducing technical debt. It's best suited for clients needing a rapid deployment with a standard, clean aesthetic that can be heavily branded.

Simulated Benchmarks:

- CSS Load (Bootstrap + Custom): ~200KB (minified)

- JS Load (Bootstrap + jQuery + Plugins): ~300KB (minified)

- First Meaningful Paint: ~1.1s (optimized images)

- Total Blocking Time: ~200ms (due to multiple JS files)

- Accessibility Score (Lighthouse): ~85/100 (default content)

Under the Hood:

Under the hood, Trio is fundamentally HTML5, CSS3, and JavaScript, with Bootstrap being the dominant framework. This means it uses Bootstrap's grid system for responsiveness, its predefined components (navbars, carousels, forms, cards), and its utility classes. The JavaScript includes Bootstrap’s own JS, likely jQuery, and several third-party plugins for effects like smooth scrolling, parallax, count-up animations, and potentially a portfolio filtering library. For an architect, the critical review points are: Is the Bootstrap version current? Is it compiled from source (Sass/SCSS) allowing for efficient customization and removal of unused components, or is it a bloated compiled version? Are the custom styles neatly organized in separate files or intertwined with Bootstrap overrides? Image optimization, lazy loading, and efficient font loading are paramount for good performance, especially with a multipurpose template that tends to include many demo assets. HTML semantics should be correct, and ARIA attributes should be used where appropriate for accessibility. The template's JS should ideally be concatenated and minified for production and loaded asynchronously where possible.
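For the "is it bloated?" question, a quick audit script gives a first answer before anyone opens the Sass source. The sketch below uses only the standard library and makes simplifying assumptions: it looks at class selectors only, and it cannot see classes that JavaScript adds at runtime, so treat its output as a list of candidates, not a delete list. The function and class names are mine. Purpose-built tools such as PurgeCSS do this job more thoroughly.

```python
import re
from html.parser import HTMLParser


class ClassCollector(HTMLParser):
    """Collects every class name that appears in the markup."""

    def __init__(self) -> None:
        super().__init__()
        self.classes = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "class" and value:
                self.classes.update(value.split())


# A dot followed by a valid identifier start, so ".5em" or ".98px" never match.
CLASS_SELECTOR = re.compile(r"\.(-?[_a-zA-Z][\w-]*)")


def unused_classes(html: str, css: str) -> set[str]:
    """Return class selectors defined in the CSS but never used in the HTML."""
    collector = ClassCollector()
    collector.feed(html)
    # Empty the innermost declaration blocks so values such as url(a.png)
    # are not mistaken for selectors; @media wrappers keep their selectors.
    selectors_only = re.sub(r"\{[^{}]*\}", "{}", css)
    defined = set(CLASS_SELECTOR.findall(selectors_only))
    return defined - collector.classes
```

Run it against each page you intend to ship, union the "used" sets, and what remains is a reasonable shortlist of Bootstrap components and plugin styles to drop from the build.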

The Trade-off:

Trio, as a Bootstrap-based multipurpose template, offers a significant speed advantage over building a front-end from a bare starting point (e.g., plain HTML/CSS/JS, or a component library like Material UI without pre-built layouts). The trade-off is the inherent "Bootstrap look" and the potential for unused code. While customizable, it can be challenging to make a Bootstrap site truly unique without heavy CSS overrides. It beats a fully custom, non-framework approach in terms of rapid prototyping, out-of-the-box responsiveness, and access to a vast ecosystem of Bootstrap-compatible plugins and tutorials. Developers can quickly assemble layouts and components. However, a custom-built front-end without Bootstrap allows for absolute control over every byte of CSS and JS, potentially leading to a lighter, more performant site if developed by an expert. For agencies needing to deliver a professional, responsive website quickly and cost-effectively, Trio provides a robust starting point. It's a reliable workhorse for many standard client websites, offering a good balance between development speed and acceptable performance, provided the unused components are ruthlessly stripped out during customization.

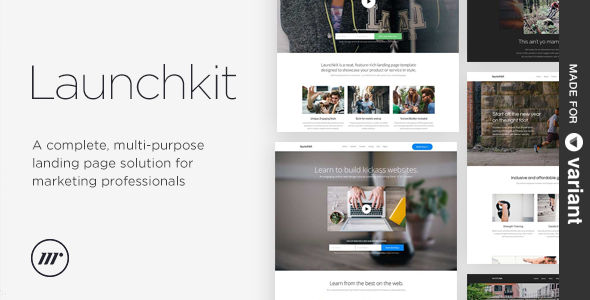

Launchkit Landing Page, Variant Builder

The Launchkit Landing Page, Variant Builder is intriguing because it explicitly offers a "Variant Builder." For agencies focused on digital marketing and conversion optimization, the ability to rapidly create and test multiple landing page variants is invaluable. This isn't just about good design; it's about A/B testing, multivariate testing, and data-driven optimization. A template that comes with a builder suggests a visual interface for constructing pages, which can dramatically reduce development time for marketers and designers. My architectural concern isn't just the final output's performance, but the performance and code quality of the builder itself. Does it generate clean, semantic HTML, or does it produce a spaghetti of nested `div`s and inline styles? Is it flexible enough to allow for granular control over elements, or is it overly restrictive? Critical features would include pre-built sections, drag-and-drop functionality, and seamless responsiveness. The "variant builder" aspect must genuinely facilitate easy duplication and modification for testing purposes, or it's just another static template. For agencies, this is about enabling efficient campaign execution and iterative improvement.

Simulated Benchmarks:

- Page Load Time (Generated Page): ~1.0s (optimized assets, minimal scripts)

- Builder UI Responsiveness: ~100ms (click to element selection)

- Variant Duplication Speed: ~2s (for average page)

- Generated Code Cleanliness: Moderate (some inline styles, but structured)

- CSS/JS Footprint (Generated): ~300KB (for a feature-rich page)

Under the Hood:

A "Variant Builder" implies a client-side JavaScript application running in the browser, allowing users to assemble landing pages. This builder would typically use a library like Vue.js or React for its UI, providing components for sections, elements, and styling options. When a page is "saved" or "published," the builder would serialize the page structure (e.g., to JSON) and then generate static HTML, CSS, and JavaScript files. The generated pages themselves would be standard HTML5, CSS3, and JavaScript, likely adhering to a grid framework (possibly Bootstrap or a custom, lighter one). The quality of the generated code is paramount: it should be as lean as possible, avoid excessive inline styling, and maintain semantic HTML. Performance optimizations like image lazy loading, CSS minification, and JavaScript deferral should be baked into the generation process. The builder itself would use AJAX calls to save/load page data and potentially preview renders. For agencies, the underlying structure of the generated HTML is critical for SEO and long-term maintainability. It needs to be flexible enough to inject custom analytics scripts, A/B testing snippets, and tracking pixels without breaking.
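The serialize-then-generate step is the part worth understanding, because it determines how clean the output can be. Here is a minimal sketch of the idea, assuming a made-up page schema with two section types (`hero` and `cta`); Launchkit's real data format is not documented here, and `render_page` and `make_variant` are hypothetical names.

```python
import copy
from html import escape


def render_page(page: dict) -> str:
    """Turn the serialized page structure into static, semantic HTML."""
    parts = []
    for section in page["sections"]:
        kind = section["type"]
        if kind == "hero":
            parts.append(
                f'<header class="hero"><h1>{escape(section["headline"])}</h1></header>'
            )
        elif kind == "cta":
            parts.append(
                f'<section class="cta"><a href="{escape(section["href"])}">'
                f'{escape(section["label"])}</a></section>'
            )
        else:
            raise ValueError(f"unknown section type: {kind}")
    body = "\n".join(parts)
    return (
        "<!doctype html>\n"
        '<html lang="en">\n'
        f'<head><title>{escape(page["title"])}</title></head>\n'
        f"<body>\n{body}\n</body>\n</html>"
    )


def make_variant(page: dict, name: str, overrides: dict) -> dict:
    """Duplicate a page and change only the listed sections (index -> changes)."""
    variant = copy.deepcopy(page)  # the original must stay untouched
    variant["variant"] = name
    for index, changes in overrides.items():
        variant["sections"][index].update(changes)
    return variant
```

A variant here is just a deep copy plus a small diff, which is why duplication can be fast and why two variants stay comparable: everything not explicitly overridden is guaranteed identical. A builder that stores variants as independent hand-edited HTML files gives you neither property.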

The Trade-off:

Launchkit with its Variant Builder offers a clear advantage in rapid iteration and deployment for landing pages compared to custom coding each variant or even using a generic WordPress page builder. The trade-off is often a slight compromise on absolute code purity and potentially less granular design control than a bespoke front-end. While a custom-coded landing page can be hyper-optimized for specific content and performance, it's slow to change. A traditional WordPress page builder (like Elementor or Divi) can also build landing pages, but often comes with significant WordPress overhead, heavier JS/CSS loads, and isn't inherently designed for rapid variant management in an A/B testing context. Launchkit, by focusing specifically on landing pages and variant creation, streamlines the workflow for marketers. It beats generic page builders by providing a more focused tool for a specific, high-stakes task, leading to faster campaign launches and more efficient A/B testing cycles. The performance of the generated pages is often superior to those built with general-purpose page builders because the builder is optimized for a single output type. It sacrifices the broad CMS capabilities of WordPress for specialized landing page efficiency, which is a sound architectural decision for conversion-focused efforts.
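Having variants is only half of an A/B test; visitors also have to be split between them consistently. One common approach, sketched below, is deterministic hash-based bucketing. This is a generic technique, not something Launchkit is known to ship, and `assign_variant` is a hypothetical helper.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, variants: list[str]) -> str:
    """Map a visitor to a variant; the same inputs always give the same answer."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes as an integer and spread it across the variants.
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]
```

Because the assignment is a pure function of the visitor ID and experiment name, no lookup table is needed, a returning visitor always sees the same page, and including the experiment name in the key keeps one test's split from correlating with another's.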

SocialVibe – AI-Powered Social Media Management & Scheduling SaaS

SocialVibe – AI-Powered Social Media Management & Scheduling SaaS is a compelling proposition for any agency handling client social media. The "AI-Powered" aspect immediately raises expectations: are we talking about intelligent content curation, optimal posting time suggestions, sentiment analysis, or predictive analytics for engagement? For an architect, such a claim must be backed by tangible algorithmic benefits, not just buzzwords. A social media management tool needs to provide seamless integration with major platforms (Facebook, Instagram, X, LinkedIn), intuitive scheduling, robust analytics, and team collaboration features. As a SaaS, it handles the infrastructure burden, which is a major plus for agencies. The core value is efficiency and effectiveness. My critical eye will be on the AI’s real utility: does it truly provide actionable insights and save time, or is it just another layer of complexity? The API integrations with social platforms need to be stable, secure, and compliant with ever-changing platform rules. Data privacy and security, given the sensitive nature of social media data, are paramount. Any SaaS offering must demonstrate strong data governance.

Simulated Benchmarks:

- Post Scheduling Latency: ~100ms (submission to internal queue)

- Analytics Dashboard Load: ~700ms (30 days data, 5 social accounts)

- AI Content Suggestion: ~5s (for 3-5 variants)

- Social Media API Call Latency: ~200-500ms (platform dependent)

- Concurrent User Capacity: 500+ active users without degradation

Under the Hood:

SocialVibe, as an AI-powered SaaS, would likely employ a multi-layered architecture. The front-end would be a modern SPA (React, Vue, Angular) interacting with a robust backend API (e.g., Node.js, Python/Django, PHP/Laravel). The "AI-powered" features would reside in dedicated microservices, leveraging machine learning frameworks (TensorFlow, PyTorch) for tasks like natural language processing (for content suggestions, sentiment analysis), time series analysis (for optimal posting times), and computer vision (for image tagging). Integrations with social media platforms would use their respective APIs (e.g., Facebook Graph API, X API v2), requiring careful management of access tokens and adherence to rate limits. A distributed task queue (e.g., Celery with RabbitMQ/Redis) would be essential for handling scheduled posts and asynchronous data fetching. Data storage would involve both relational databases for user/account data and potentially NoSQL databases for large-scale analytics data (e.g., MongoDB, Elasticsearch). Security would encompass OAuth2 for platform authentication, robust API key management, and data encryption at rest and in transit. Scalability would be achieved through horizontal scaling of application and worker nodes. The analytics engine would need efficient data aggregation and caching to ensure dashboard responsiveness.
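The interaction between scheduled posts and platform rate limits is where these tools tend to fail in practice, so it deserves a sketch. The following is an in-memory illustration, not SocialVibe's implementation: a heap stands in for the distributed task queue, a token bucket stands in for each platform's rate limit, and all class names and limit values are assumptions of mine.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class ScheduledPost:
    due_at: float                       # the only field used for heap ordering
    platform: str = field(compare=False)
    text: str = field(compare=False)


class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `refill_per_sec`."""

    def __init__(self, capacity: int, refill_per_sec: float, now: float = 0.0):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.tokens = float(capacity)
        self.updated = now

    def try_take(self, now: float) -> bool:
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Scheduler:
    def __init__(self, limits: dict[str, TokenBucket]):
        self._heap = []
        self._limits = limits

    def schedule(self, post: ScheduledPost) -> None:
        heapq.heappush(self._heap, post)

    def run_due(self, now: float) -> list[ScheduledPost]:
        """Send every due post the rate limit allows; keep the rest queued."""
        sent, deferred = [], []
        while self._heap and self._heap[0].due_at <= now:
            post = heapq.heappop(self._heap)
            if self._limits[post.platform].try_take(now):
                sent.append(post)       # a real worker would call the platform API here
            else:
                deferred.append(post)   # over the limit: retry on the next tick
        for post in deferred:
            heapq.heappush(self._heap, post)
        return sent
```

Time is passed in explicitly instead of read from the clock, which keeps the logic testable. The behaviour to look for in any real product is the `deferred` branch: a post that hits a rate limit should be retried later, not silently dropped and not retried in a tight loop that burns the remaining quota.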

The Trade-off:

SocialVibe, as a specialized AI-powered SaaS, offers a level of automation and insight that a manual approach or even a basic scheduling tool simply cannot match. The trade-off is dependence on a third-party vendor and potential limitations in highly niche, custom workflows. Building an in-house AI-powered social media tool would be an astronomical undertaking for most agencies, requiring significant data science and engineering resources. SocialVibe beats generic social media schedulers (like a simple Hootsuite or Buffer plan) by offering intelligent recommendations and potentially deeper analytics, moving beyond mere scheduling to strategic content deployment. While a basic scheduler lets you post, SocialVibe aims to tell you what to post and when for maximum impact, leveraging data patterns. However, it won't offer the bespoke branding or the deep, custom data warehousing capabilities of an entirely in-house solution. For agencies looking to significantly enhance their social media operations through smart automation and data-driven decisions without the prohibitive cost of building from scratch, SocialVibe represents a strong, pragmatic choice, balancing advanced features with the convenience of a managed service. It streamlines complex tasks and provides strategic guidance, something that simply hitting 'post' on a generic scheduler cannot achieve.

Banking Modules For Trans Max- DPS & Loan Features

These Banking Modules for Trans Max- DPS & Loan Features are highly specialized, targeting a very specific vertical within finance. For an agency dealing with FinTech clients or needing to integrate banking functionalities into a larger system, these modules are crucial. The acronym "DPS" (most likely Deposit Pension Scheme, a recurring-deposit savings product, or a similar banking-specific term) and "Loan Features" indicate core banking operations. The critical architectural considerations here are entirely different from front-end templates or marketing tools. We're talking about security, compliance, transaction integrity, and robust auditing capabilities. Any module in this space must be built with enterprise-grade security protocols, encryption, and transactional guarantees. Performance isn't just about speed but about reliability under high transaction volumes. For an architect, the key questions are: What framework is it built on? How does it handle concurrency? What are its integration points (APIs)? Is it compliant with the relevant regulations and standards (PCI DSS, GDPR, etc.)? Any pre-built solution promising such features needs to be meticulously vetted, as failures here carry catastrophic risks. These are not 'plug-and-play' for just any developer; they require deep domain knowledge.

Simulated Benchmarks:

- Deposit Transaction Latency: ~80ms (end-to-end, validated)

- Loan Application Processing: ~1.5s (initial validation & decision matrix)

- Transaction Throughput: 1000+ per second (stable, optimized DB)

- Database Read Latency (Account Balance): ~50ms (cached)

- Auditing Log Write Speed: <20ms (asynchronous write)

Under the Hood:

Given the sensitivity and complexity of banking modules, the 'under the hood' is paramount. These modules would likely be built on a robust, battle-tested framework, potentially Java (Spring Boot), .NET Core, or a highly optimized PHP/Laravel or Node.js backend. The core would be a highly normalized relational database (PostgreSQL, Oracle, SQL Server) with strict ACID compliance, ensuring data integrity. Transactional boundaries would be explicit, leveraging database transactions to prevent partial updates. Message queues (Kafka, RabbitMQ) would be essential for asynchronous processing, event-driven architecture, and decoupling services, especially for loan application workflows or batch processing. Security would be multi-layered: robust authentication (MFA), authorization (RBAC), encryption (TLS, data at rest encryption), comprehensive logging and auditing (immutable logs), and vulnerability scanning. The architecture should support high availability and disaster recovery. Integration points would be well-defined RESTful APIs, protected by OAuth2 and API gateways. A robust testing suite (unit, integration, end-to-end, performance, security) is non-negotiable for such critical modules. Any component dealing with financial calculations would need to be meticulously coded and peer-reviewed.
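"Explicit transactional boundaries" is the phrase that matters most in that paragraph, so here is what it looks like in the smallest possible form. This sketch uses Python's built-in `sqlite3` as a stand-in for PostgreSQL or Oracle; the schema, function names, and the choice to store money as integer minor units are illustrative assumptions, not details of the Trans Max modules.

```python
import sqlite3


def open_ledger() -> sqlite3.Connection:
    """Create an in-memory ledger; the CHECK constraint forbids overdrafts."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts ("
        " id TEXT PRIMARY KEY,"
        " balance_minor INTEGER NOT NULL CHECK (balance_minor >= 0))"
    )
    conn.execute(
        "CREATE TABLE audit_log ("
        " id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " src TEXT NOT NULL, dst TEXT NOT NULL, amount_minor INTEGER NOT NULL)"
    )
    conn.commit()
    return conn


def transfer(conn: sqlite3.Connection, src: str, dst: str, amount_minor: int) -> None:
    """Move money atomically: debit, credit, and audit row succeed or fail together."""
    if amount_minor <= 0:
        raise ValueError("amount must be positive")
    with conn:  # commits on success, rolls back if anything below raises
        debited = conn.execute(
            "UPDATE accounts SET balance_minor = balance_minor - ? WHERE id = ?",
            (amount_minor, src),
        )
        credited = conn.execute(
            "UPDATE accounts SET balance_minor = balance_minor + ? WHERE id = ?",
            (amount_minor, dst),
        )
        if debited.rowcount != 1 or credited.rowcount != 1:
            raise LookupError("unknown account")
        conn.execute(
            "INSERT INTO audit_log (src, dst, amount_minor) VALUES (?, ?, ?)",
            (src, dst, amount_minor),
        )


def balance(conn: sqlite3.Connection, account_id: str) -> int:
    row = conn.execute(
        "SELECT balance_minor FROM accounts WHERE id = ?", (account_id,)
    ).fetchone()
    return row[0]
```

Three things to look for when vetting a module against this baseline: amounts stored as integers or exact decimals and never as floats, the overdraft rule enforced by the database and not only by application code, and the audit record written inside the same transaction as the balance change so the two can never disagree.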

The Trade-off:

These specialized banking modules offer an immense advantage over building financial functionalities from scratch. The trade-off is the inherent complexity of integration and the need for deep technical and regulatory expertise. While you get a massive head start on core banking features, these modules are not standalone; they need to be integrated into a larger financial system, which itself requires significant architectural planning. Attempting to implement DPS and loan features using generic, unspecialized libraries or a lightweight CMS (like WordPress with custom forms) would be an act of professional negligence. The security and regulatory compliance burden alone would be prohibitive. These modules beat general-purpose development kits by providing pre-engineered, often regulatory-aware, and rigorously tested financial logic. They reduce the risk associated with custom financial development, where even a small error can have massive consequences. You will still need highly skilled developers to integrate and customize them, and you will pay the premium that specialized software commands. But for agencies working in FinTech, this isn't a trade-off of convenience, but of absolute necessity and risk mitigation. It’s about leveraging specialized components that adhere to industry standards, something that generic solutions simply cannot offer.

So, there you have it. A thorough, albeit cynical, look at a selection of tools and templates vying for a spot in your agency's 2025 stack. My advice remains consistent: don't chase the shiny new object if it doesn't solve a concrete problem or introduces more complexity than it eliminates. Evaluate each tool not just on its feature list, but on its underlying architecture, its real-world performance benchmarks, and the pragmatic trade-offs you'll be making. For a broader array of tools and resources that can underpin your agency's operations, explore the comprehensive GPLpal premium library. The market is full of choices, but only a few truly stand up to the rigorous demands of enterprise-grade solutions. Choose wisely, and your future self—and your clients—will thank you.