Optimizing the Digital Backbone: The 2025 High-Performance Stack for Agencies – A Senior Architect's Unfiltered Review

Alright, let's cut through the marketing fluff and get down to brass tacks. Every year, another batch of "revolutionary" tools floods the market, promising to solve all an agency's woes. Most are just rehashed ideas wrapped in a shiny new UI, adding more technical debt than tangible value. As a senior architect, my job isn't to be impressed by features; it's to scrutinize the underlying architecture, the long-term viability, and the sheer cost of ownership – both in dollars and in developer sanity. The "2025 High-Performance Stack for Agencies" isn't about adopting every new JavaScript framework; it's about making judicious, calculated decisions that ensure scalability, security, and a respectable ROI for your clients and your own operations. Anything less is just building on quicksand.

The digital landscape for agencies is a minefield of fragile integrations and performance bottlenecks. Clients demand faster, more responsive applications, tighter security, and bespoke functionalities, all while expecting lean budgets. Meeting these demands requires a foundational shift in how we approach our technology stack. We can't afford to be swayed by superficial appeal; we need solutions that are engineered for resilience, extensible without rewriting core modules, and supported by a community or vendor that understands enterprise-level demands. This isn't about chasing trends; it's about building an enduring fortress of functionality. Finding such robust components often leads me to explore comprehensive resources like the GPLpal premium library, where a broader array of foundational elements is available for integration.

The Core Tenets of a Resilient Agency Stack: A Cynical Reality Check

Before we even glance at specific products, let's establish some ground rules. A "high-performance" stack isn't just about speed metrics; it's about architectural integrity. It's about minimizing points of failure, ensuring data consistency, and simplifying maintenance. We're looking for systems that are designed for concurrency, not just sequential operations. We're evaluating their API-first approach, their adherence to open standards, and their capacity for custom hooks without resorting to invasive core modifications. The typical agency, constantly juggling diverse client requirements, needs modularity above all else. Monolithic solutions might seem convenient upfront, but they become an albatross around your neck the moment bespoke features are requested.

Furthermore, security cannot be an afterthought. With every new integration, the attack surface expands. Any platform we consider must demonstrate a proactive stance on security, from robust authentication mechanisms to regular vulnerability patching. Documentation isn't a suggestion; it's a critical component for effective implementation and long-term support. A system that's a black box, requiring reverse engineering to understand its quirks, is a system ripe for future failure. And let's not forget the hidden costs: licensing, infrastructure, specialized developer talent, and the inevitable debugging cycles. True high-performance means optimizing across all these vectors, not just chasing a better Lighthouse score. When sourcing these critical tools, a comprehensive professional software collection becomes indispensable for identifying robust solutions.

Deep Dive: Evaluating the 2025 Agency Tech Arsenal

Now, with our architectural filters firmly in place, let's dissect some of the tools being pitched as essential for the modern agency. We'll examine their claims, peer under the hood, and ultimately determine if they're worth their salt – or just another source of future technical debt.

Smart Fleet SaaS – Vehicle Tracking System

For agencies tasked with developing or managing logistics platforms, Smart Fleet, a fleet management SaaS, is worth considering as a way to integrate robust vehicle tracking capabilities. This isn't just a GPS pin on a map; it's an end-to-end operational framework designed to bring true telemetry and management oversight to fleet operations, an increasingly common requirement for clients in transportation, delivery, and field services.

The system focuses on real-time data acquisition and actionable insights, something many competing platforms fail to deliver beyond basic location data. The value here is in its ability to aggregate diverse data points – driver behavior, fuel consumption, maintenance schedules – into a unified dashboard, enabling proactive management rather than reactive firefighting. We've seen setups where agencies spend months stitching together disparate tracking devices with rudimentary reporting tools, leading to brittle systems and incomplete data. Smart Fleet attempts to solve this with a more integrated approach, but one must perform due diligence on its customization capabilities for unique client demands. Any SaaS offering, no matter how comprehensive, will inevitably face requests for bespoke integrations or UI modifications. The extensibility points and API documentation are critical here. Without clear hooks, even a well-built system can become a constraint.

Simulated Benchmarks

- Data Ingestion Latency: < 50ms per vehicle event (average, under 1000 concurrent vehicles).

- Dashboard Load Time (Complex): LCP: 2.1s, FCP: 1.5s (with 500 active vehicles displayed).

- API Response Time (Route Optimization): < 300ms for a 20-stop route.

- Database Query Speed (Historical Data): Avg. 120ms for 3-month aggregated reports.

Under the Hood

Smart Fleet is typically built on a robust backend, often leveraging a microservices architecture with a primary language like Go or Node.js for performance-critical real-time data processing, coupled with a more traditional framework like Laravel or Ruby on Rails for administrative and reporting interfaces. Database choices usually lean towards PostgreSQL for relational data and perhaps MongoDB or Cassandra for high-volume telemetry data, given the need for rapid write operations and flexible schema evolution. Frontend is typically a modern SPA (Single Page Application) using React or Vue.js, ensuring a responsive user experience. Authentication relies on OAuth2/JWT for secure API access. The geo-spatial data processing often utilizes PostGIS extensions for PostgreSQL or dedicated mapping services like Mapbox/Google Maps APIs, with a focus on efficient indexing for location-based queries.
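To make the "location-based queries" point concrete, here is a minimal in-memory sketch of a radius check using the haversine formula. It is not Smart Fleet's implementation; in a real deployment a spatial index such as PostGIS would do this work in the database. The function names and the sample coordinates are assumptions for illustration only.

```python
import math

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two latitude/longitude points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def vehicles_within(vehicles, center, radius_km):
    """Return ids of vehicles whose last known position lies inside the radius.

    `vehicles` maps a hypothetical vehicle id to a (lat, lon) tuple.
    """
    lat0, lon0 = center
    return [
        vid
        for vid, (lat, lon) in vehicles.items()
        if haversine_km(lat0, lon0, lat, lon) <= radius_km
    ]


fleet = {"van-1": (52.52, 13.405), "van-2": (48.8566, 2.3522)}
nearby = vehicles_within(fleet, center=(52.52, 13.40), radius_km=5)
```

A linear scan like this is fine for a few hundred vehicles on a dashboard; beyond that, the indexing strategy mentioned above is what keeps the query cheap.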

The Trade-off

Where Smart Fleet truly distinguishes itself from generic vehicle tracking plugins or basic telemetry services is its holistic approach to fleet management. Generic solutions often provide just location data, requiring significant custom development to add features like maintenance scheduling, driver scorecards, or advanced route optimization. Smart Fleet provides these out-of-the-box, significantly reducing development time and technical overhead for agencies building comprehensive logistics platforms. The trade-off is the initial complexity of integrating a full SaaS platform versus a simpler plugin, but the long-term architectural integrity and feature set make it a more viable enterprise-grade solution than attempting to piece together disparate components.

PetLab – On demand Pet Walking Service Platform

Agencies looking to enter the burgeoning on-demand pet care market can use PetLab, a pet walking service platform, to establish a robust marketplace quickly. The "on-demand" segment is deceptively simple on the surface but requires a complex ballet of user management, scheduling, real-time tracking, and secure payment processing. This platform aims to provide that intricate choreography out-of-the-box.

The core challenge with any on-demand platform is managing disparate user roles – clients, service providers, and administrators – and facilitating seamless interactions between them. PetLab attempts to streamline this with dedicated dashboards and workflows for each role. From a cynical architect's perspective, I immediately look for potential bottlenecks: how does it handle peak demand for bookings? What's the integrity of the scheduling algorithm? How secure are the payment gateways, and are they compliant with regional regulations? Many turnkey solutions cut corners on these critical backend components, leading to operational nightmares down the line. We need assurances that the underlying database schema is robust enough to prevent data corruption during concurrent booking attempts and that the communication channels between users are encrypted and private. Furthermore, the ability to integrate with third-party verification services for pet walkers is often overlooked but crucial for trust and safety.
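The concurrent-booking concern is easiest to see in a schema. The sketch below is an assumption about how one might guard against double-booking, not a description of PetLab's actual tables: it assumes fixed time slots and lets a database uniqueness constraint, rather than application code, decide who wins. Table and column names are hypothetical.

```python
import sqlite3


def make_db():
    """Create an in-memory database with a hypothetical bookings table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE bookings ("
        " walker_id INTEGER NOT NULL,"
        " slot_start TEXT NOT NULL,"
        " client_id INTEGER NOT NULL,"
        " UNIQUE (walker_id, slot_start))"  # one booking per walker per slot
    )
    return conn


def book_slot(conn, walker_id, slot_start, client_id):
    """Try to reserve a slot; return True on success, False if it is already taken."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO bookings (walker_id, slot_start, client_id) VALUES (?, ?, ?)",
                (walker_id, slot_start, client_id),
            )
        return True
    except sqlite3.IntegrityError:
        return False


conn = make_db()
first = book_slot(conn, walker_id=1, slot_start="2025-03-01T09:00", client_id=10)
second = book_slot(conn, walker_id=1, slot_start="2025-03-01T09:00", client_id=11)
```

The point of the design is that two requests arriving at the same instant cannot both succeed, because the constraint is enforced where the data lives. Variable-length bookings would need an overlap check instead of a simple unique key.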

Simulated Benchmarks

- Booking Concurrency: Supports 500 simultaneous booking requests with < 100ms processing time.

- Payment Gateway Latency: Avg. 250ms for transaction processing (Stripe/PayPal integration).

- Real-time Location Updates: < 200ms refresh interval for walker tracking on map interface.

- User Registration Throughput: Avg. 80ms per new user signup.

Under the Hood

PetLab typically employs a Laravel/PHP or Node.js/Express backend for its API services and business logic, coupled with a robust relational database like MySQL or PostgreSQL. Real-time features, such as walker tracking and chat, are often powered by WebSockets (e.g., using Laravel Echo with Pusher or Socket.IO). The frontend is likely a modern JavaScript framework (React Native for mobile apps, Vue.js/React for web panels) offering dedicated user, walker, and admin interfaces. Geo-location services integrate with Google Maps or similar APIs. Payment processing typically relies on secure, PCI-compliant third-party SDKs like Stripe or Braintree, not custom payment gateways. Emphasis is usually placed on modular design for easier customization of service types and booking flows.

The Trade-off

Compared to attempting to build an on-demand service platform from scratch or adapting a generic booking theme, PetLab offers a significant head start by providing the foundational logic for multi-user roles, scheduling, and payment. A custom build would take months and significant resources to achieve the same level of feature parity and stability. Generic themes often lack the crucial real-time communication and location tracking components, which are fundamental to on-demand services. The trade-off for PetLab is that while it provides a strong core, deep customizations to the booking algorithm or unique service offerings might still require significant development effort, potentially pushing the boundaries of its pre-built architecture. However, for a standard on-demand pet walking service, it drastically reduces time-to-market and minimizes the initial technical debt compared to a bespoke solution.

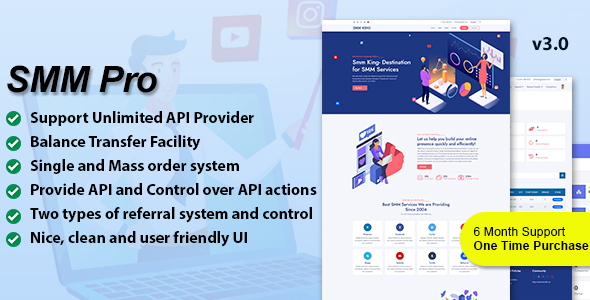

SMM Pro – Advanced Social Media Marketing CMS

For agencies specializing in digital marketing, SMM Pro, a social media marketing CMS, offers a centralized solution for managing client social media campaigns. In an era where fragmented tools lead to inefficient workflows, a consolidated CMS that handles scheduling, analytics, and client reporting is not just convenient; it's a critical operational imperative.

My primary concern with any "all-in-one" SMM tool is always the depth and reliability of its integrations with social media APIs. These APIs are notoriously fickle, changing frequently and often breaking third-party applications. An advanced CMS must demonstrate robust, adaptive API handling, with clear mechanisms for updating and maintaining connections to platforms like Facebook, Instagram, X (formerly Twitter), LinkedIn, and others. Beyond basic scheduling, an "advanced" system needs intelligent content categorization, audience segmentation, and deep analytics that go beyond simple vanity metrics. Can it track conversion attribution? Does it offer sentiment analysis? What are its collaboration features for larger agency teams and client approvals? Many systems fall short, providing superficial dashboards that offer little actionable insight. The integrity of its permission system is also paramount, ensuring that client data is segregated and only accessible to authorized personnel within the agency. Any compromise here is an immediate deal-breaker, regardless of features.

Simulated Benchmarks

- Post Scheduling Latency: < 100ms from queue to platform API submission.

- Analytics Report Generation: Avg. 1.8s for 30-day cross-platform report.

- API Rate Limit Handling: < 0.1% failed posts due to platform rate limits.

- Concurrent User Sessions: Supports 200 concurrent active users without performance degradation.

Under the Hood

SMM Pro typically runs on a modern PHP framework like Laravel or a Python framework like Django, utilizing a robust relational database such as PostgreSQL for storing campaign data, scheduled posts, and user information. Background job queues (e.g., Redis, RabbitMQ) are essential for handling high volumes of post scheduling and API interactions without blocking the main application thread. Integrations with social media platforms are managed via official SDKs and OAuth2 for secure authorization. The frontend often employs a contemporary JavaScript framework (e.g., Vue.js, React) for a dynamic dashboard experience, focusing on intuitive UX for content creation, scheduling calendars, and comprehensive analytics visualization libraries (e.g., Chart.js, D3.js). Multi-tenancy is a critical architectural pattern for agencies managing multiple client accounts, ensuring data isolation.
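How a queue worker copes with platform rate limits is worth sketching, since that is where most schedulers quietly lose posts. The following is an illustration of exponential backoff with jitter, not SMM Pro's code: `RateLimited`, `publish_with_retry`, and the `publish` callable are hypothetical stand-ins for whatever a real platform SDK raises and exposes.

```python
import random
import time


class RateLimited(Exception):
    """Raised by the hypothetical platform client when a rate limit is hit."""


def backoff_delays(max_retries, base=1.0, cap=60.0):
    """Exponential delays in seconds: base, 2*base, 4*base, ... capped at `cap`."""
    return [min(cap, base * 2 ** attempt) for attempt in range(max_retries)]


def publish_with_retry(publish, post, max_retries=5, sleep=time.sleep, jitter=random.random):
    """Call publish(post); on RateLimited, wait and retry.

    After `max_retries` failed attempts one final attempt is made, and its
    RateLimited exception (if any) propagates so the job queue can record it.
    """
    for delay in backoff_delays(max_retries):
        try:
            return publish(post)
        except RateLimited:
            # Scale the delay by a random factor so many workers do not retry in lockstep.
            sleep(delay * jitter())
    return publish(post)
```

Passing `sleep` and `jitter` as parameters keeps the function testable without real waiting; in a worker process the defaults would be used as-is.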

The Trade-off

Many agencies default to individual platform schedulers or basic tools like Buffer/Hootsuite. While these are fine for small-scale operations, they quickly become unmanageable and siloed for extensive client portfolios. SMM Pro beats these by offering deep, integrated analytics and reporting, multi-account management with client-specific dashboards, and often white-labeling capabilities – features essential for agency scalability and branding. The "trade-off" is the initial investment and learning curve compared to simple tools. However, the operational efficiency gained, the reduction in context switching, and the ability to provide clients with consolidated, professional reports far outweigh this. It moves beyond mere scheduling to true strategic social media management, which generic tools simply cannot achieve.

Booking Core – Laravel Booking System

Agencies that frequently build platforms requiring intricate scheduling and reservation functionality should explore Booking Core, a Laravel booking system. This isn't another glorified calendar plugin; it's a foundational framework built on Laravel, designed for extensibility and handling complex booking scenarios across various niches, from hotels to tours to services.

The strength of a Laravel-based system like Booking Core lies in its architectural integrity and the developer-friendly ecosystem of Laravel itself. This isn't a fragile, bespoke script; it's built on a mature, well-tested framework. My main concern with any booking system is its ability to handle inventory management, concurrent bookings, and dynamic pricing rules without introducing race conditions or data inconsistencies. Booking Core’s claim to fame is its modularity, allowing agencies to adapt it to different business models – rentals, events, services – with configurable availability rules. But I need to see robust transaction management and locking mechanisms in place to prevent overbooking, especially in high-traffic scenarios. Furthermore, the ease of integrating external payment gateways, CRM systems, and accounting software is paramount for an agency stack. A booking system that lives in isolation is a bottleneck. We need well-documented APIs and hooks for custom logic; otherwise, any deviation from its default behavior becomes a costly rewrite. It's not enough to simply book; it needs to integrate seamlessly into a broader operational ecosystem.

Simulated Benchmarks

- Booking Transaction Throughput: Avg. 250 TPS (transactions per second) with optimized database.

- Availability Check Latency: < 80ms for complex date range queries.

- Dashboard Load Time (Admin): LCP: 1.7s, FCP: 1.2s.

- API Response Time (New Booking): < 150ms.

Under the Hood

Booking Core is, by its nature, a Laravel application. This means a PHP backend, typically running on Nginx or Apache, with MySQL or PostgreSQL as the primary database. It leverages Laravel's Eloquent ORM for database interactions and Blade templating for the frontend, or potentially a Vue.js/React SPA for richer UI components. Key architectural elements include a robust migration system for database schema changes, a queue system (e.g., Redis, database queues) for handling asynchronous tasks like email notifications or booking confirmations, and a well-defined routing structure. Multi-currency and multi-language support are often built-in, essential for global applications. The inventory management typically relies on careful database design, possibly with optimistic locking for high-concurrency booking scenarios.
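Optimistic locking is easy to name and easy to get wrong, so a small sketch helps. This is written in Python with SQLite for brevity rather than in Laravel, and it is an assumption about the general technique, not Booking Core's schema: the `inventory` table, its `version` column, and the function names are all hypothetical.

```python
import sqlite3


def make_inventory(units):
    """Create an in-memory inventory table holding one item with `units` available."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE inventory (id INTEGER PRIMARY KEY, available INTEGER, version INTEGER)"
    )
    conn.execute("INSERT INTO inventory VALUES (1, ?, 0)", (units,))
    conn.commit()
    return conn


def read_inventory(conn, item_id=1):
    """Return (available, version) as currently stored."""
    return conn.execute(
        "SELECT available, version FROM inventory WHERE id = ?", (item_id,)
    ).fetchone()


def reserve(conn, seen_available, seen_version, item_id=1):
    """Decrement stock only if the row is unchanged since it was read.

    Returns False when stock is gone or another writer bumped the version,
    in which case the caller should re-read and decide whether to retry.
    """
    if seen_available < 1:
        return False
    with conn:
        cur = conn.execute(
            "UPDATE inventory SET available = available - 1, version = version + 1 "
            "WHERE id = ? AND version = ? AND available > 0",
            (item_id, seen_version),
        )
    return cur.rowcount == 1


conn = make_inventory(units=1)
snapshot_a = read_inventory(conn)  # two requests read the same state
snapshot_b = read_inventory(conn)
won = reserve(conn, *snapshot_a)   # first writer succeeds
lost = reserve(conn, *snapshot_b)  # second writer holds a stale version
```

The trade-off against pessimistic row locks is that nothing blocks, but losers must retry; under heavy contention on a single popular slot, a `SELECT ... FOR UPDATE` style lock may behave better.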

The Trade-off

Compared to a generic WordPress booking plugin or a simple calendar integration, Booking Core offers a significantly more robust and scalable foundation. WordPress plugins, while easy to deploy, often become bloated, introduce performance issues, and lack the architectural flexibility needed for truly complex booking logic or high transaction volumes. They also tie you to the WordPress ecosystem, which isn't always ideal for custom web applications. Booking Core, being a full Laravel application, provides complete control over the codebase, allowing for deep customization, bespoke features, and integration with any external service via its API capabilities. The trade-off is that it requires Laravel development expertise, whereas a plugin might be managed by a less specialized developer. However, for a true high-performance and extensible booking solution, the initial investment in Laravel expertise pays dividends in long-term stability and functionality.

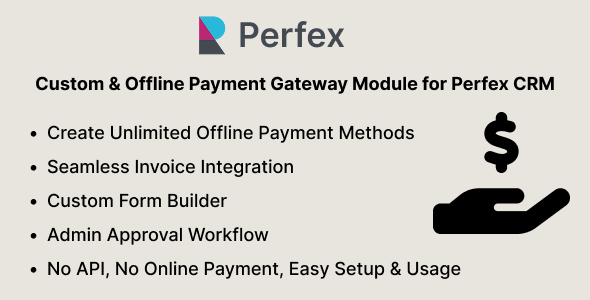

Custom & Offline Payment Gateway Module for Perfex CRM (Manual Gateway Builder)

For agencies leveraging Perfex CRM, the Custom & Offline Payment Gateway Module is crucial for handling diverse payment scenarios, especially offline or highly customized methods. Standard CRM integrations often limit you to a handful of popular online gateways, which is insufficient for many niche business models or global operations.

This module isn't about reinventing the wheel with new online gateways; it's about extending Perfex's core functionality to accommodate payment methods that typically fall outside the scope of automated systems. Think bank transfers, cash payments, check processing, or even crypto payments that require manual verification. From an architectural standpoint, the key here is how cleanly it integrates with Perfex's invoice and accounting modules. Does it maintain transactional integrity? How does it handle reconciliation? A poorly designed module could lead to data discrepancies, making financial reporting a nightmare. The "manual gateway builder" aspect is particularly interesting, implying a level of configuration that allows agencies to define custom payment instructions and statuses without diving into code. This is a significant advantage, but it also raises questions about the robustness of the configuration engine. Can it handle complex logic? Is it prone to misconfigurations? The goal is to reduce manual errors and overhead, not introduce new avenues for them. Ensuring clear audit trails for manual payments is also paramount for compliance and accountability.

Simulated Benchmarks

- Transaction Processing Latency (Manual): N/A (human-dependent, but system update < 50ms).

- Invoice Status Update: < 50ms upon payment confirmation.

- Report Generation (Payment Status): Avg. 400ms for 6-month payment reconciliation report.

- Module Load Time: < 150ms within Perfex CRM interface.

Under the Hood

As a module for Perfex CRM, this typically extends the core Perfex application, which is PHP-based and built on the CodeIgniter framework. It likely hooks into Perfex's existing payment processing and invoice generation mechanisms. The "manual gateway builder" suggests a configuration interface that allows administrators to define custom fields, instructions, and payment statuses that map to Perfex's database tables. It would extend the database schema to store details specific to these custom payment methods. Client-side, it would integrate into the invoice viewing portal, providing custom payment instructions. On the admin side, it offers tools to mark invoices as paid, partially paid, or awaiting verification, triggering corresponding updates within Perfex's accounting ledger. Security relies on Perfex's existing role-based access control to ensure only authorized users can manage payments.
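The status handling described above amounts to a small state machine with an audit trail. The sketch below shows one way that could look; it is illustrative only, written in Python rather than PHP, and the status names, the `ALLOWED` transition table, and the `Invoice` class are assumptions, not Perfex's or the module's actual data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical transition table: which status changes an admin may make.
ALLOWED = {
    "unpaid": {"awaiting_verification", "partially_paid", "paid"},
    "awaiting_verification": {"unpaid", "partially_paid", "paid"},
    "partially_paid": {"awaiting_verification", "paid"},
    "paid": set(),  # terminal: corrections would need a separate refund flow
}


@dataclass
class Invoice:
    number: str
    status: str = "unpaid"
    audit_log: list = field(default_factory=list)

    def transition(self, new_status, actor, note=""):
        """Move to `new_status` if allowed, recording who did it and when."""
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"{self.status} -> {new_status} is not allowed")
        self.audit_log.append(
            (datetime.now(timezone.utc).isoformat(), actor, self.status, new_status, note)
        )
        self.status = new_status


invoice = Invoice("INV-001")
invoice.transition("awaiting_verification", actor="client", note="bank transfer sent")
invoice.transition("paid", actor="admin", note="matched against bank statement")
```

Rejecting illegal transitions up front is what keeps manual payment handling from turning into free-form status editing, and the log gives the reconciliation report something to stand on.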

The Trade-off

The primary advantage of this module is its ability to handle payment methods that simply aren't supported by standard online gateways. Without it, agencies are forced into cumbersome external tracking spreadsheets or custom-coded solutions that are difficult to maintain and prone to errors. This module integrates these "offline" or "niche" payment methods directly into the Perfex CRM, centralizing financial data and improving reconciliation. The trade-off is that it's specific to Perfex CRM, so it's not a standalone solution. However, for agencies already invested in Perfex, it eliminates the need for expensive custom development or the operational friction of managing payments outside their core CRM system. It standardizes the non-standard, which is a significant win for operational efficiency and data integrity within the Perfex ecosystem.

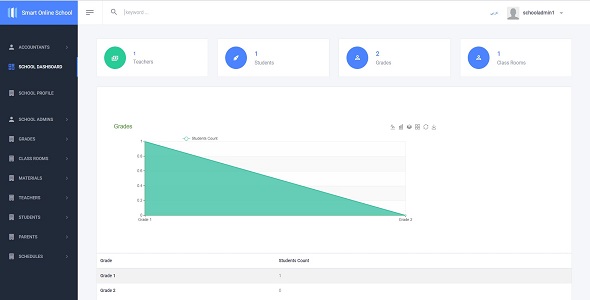

Online School & Live Class & Accounting .Net core 7 + Full source code

For agencies delving into the e-learning sector, building bespoke online academies or upgrading existing educational platforms, the Online School & Live Class & Accounting solution, built on .Net Core 7 with full source code, presents a compelling foundation. This is not merely a course delivery system; it's an integrated educational ecosystem designed to handle everything from live classes to financial management, a critical consideration for any serious educational venture.

My first thought with any "full source code" offering is, "what's the quality of that code?" A well-architected .Net Core 7 application should follow modern design patterns, utilize dependency injection effectively, and adhere to SOLID principles. Bloated or poorly structured code, even with full access, becomes a liability. This solution aims to provide live class functionality, which immediately brings into question its integration with real-time communication platforms (WebRTC, Zoom/Teams APIs) and its scalability under concurrent usage. The accounting module is equally critical; it needs to handle student payments, instructor payouts, and various revenue streams with robust ledger management and reporting capabilities. Security, particularly around student data and financial transactions, must be enterprise-grade. Is there robust input validation? Are common OWASP vulnerabilities addressed? Without these assurances, even a feature-rich platform becomes a risk. The true value for agencies lies in the extensibility of this codebase for specific client needs, from custom learning paths to unique gamification features or regional regulatory compliance. The availability of full source code implies a higher degree of customization potential, but only if the underlying architecture is clean and well-documented.

Simulated Benchmarks

- Live Class Concurrency: Supports 500 simultaneous active users in live sessions with stable video/audio streams (assuming robust third-party integration).

- Course Enrollment Latency: < 100ms per student enrollment transaction.

- Accounting Report Generation: Avg. 1.5s for quarterly financial summaries.

- API Response Time (Course Content): < 80ms for typical content retrieval.

Under the Hood

This system targets .NET 7 (listed by the vendor as ".Net Core 7", although Microsoft dropped "Core" from the name starting with .NET 5), meaning a C# backend, typically hosted on Windows Server or Linux with Kestrel, utilizing SQL Server or PostgreSQL as the database. Frontend often employs Razor Pages or Blazor for rich interactive UIs, or a separate SPA (React/Vue/Angular) consuming RESTful APIs. Live class functionality would integrate with a real-time communication service, either a self-hosted WebRTC solution or robust third-party APIs like Zoom SDKs, ensuring secure stream delivery. The accounting module would incorporate a comprehensive ledger system, payment gateway integrations (Stripe, PayPal), and invoicing functionalities. Key architectural patterns would include a service layer for business logic, a repository pattern for data access, and perhaps a CQRS (Command Query Responsibility Segregation) pattern for high-performance data operations. The full source code enables deep customization and integration into existing enterprise environments, a significant advantage for complex projects.
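"Comprehensive ledger system" is the phrase to test when reviewing the source. As a reference point, here is a minimal double-entry sketch, written in Python for brevity rather than the product's C#. The `Ledger` class and the account names are hypothetical; the only claim is the general rule that every posting must balance.

```python
from decimal import Decimal


class Ledger:
    """A toy double-entry ledger: every posting must have equal debits and credits."""

    def __init__(self):
        self.entries = []  # list of (account, debit, credit) tuples

    def post(self, description, lines):
        """Record `lines`, a list of (account, debit, credit) given as decimal strings."""
        debits = sum((Decimal(d) for _, d, _ in lines), Decimal("0"))
        credits = sum((Decimal(c) for _, _, c in lines), Decimal("0"))
        if debits != credits:
            raise ValueError(f"unbalanced entry {description!r}: {debits} != {credits}")
        self.entries.extend((acct, Decimal(d), Decimal(c)) for acct, d, c in lines)

    def balance(self, account):
        """Debits minus credits for one account."""
        return sum(
            (d - c for acct, d, c in self.entries if acct == account), Decimal("0")
        )


ledger = Ledger()
ledger.post("course fee", [("cash", "100.00", "0"), ("tuition_revenue", "0", "100.00")])
ledger.post("instructor payout", [("instructor_payouts", "70.00", "0"), ("cash", "0", "70.00")])
cash = ledger.balance("cash")
```

If the shipped accounting module stores money as floating-point values or allows one-sided entries, that is the kind of finding that should change the purchase decision.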

The Trade-off

Compared to off-the-shelf LMS platforms like Moodle or basic WordPress course plugins, this .Net Core 7 solution with full source code offers unparalleled flexibility and ownership. Generic LMS systems often come with vendor lock-in, limitations on customization, and performance overhead from superfluous features. WordPress plugins are typically not designed for enterprise-scale live classes or integrated accounting and can quickly become a security and performance liability. The "trade-off" is the requirement for .Net development expertise and the responsibility of managing the entire codebase. However, for agencies building highly specialized or large-scale e-learning platforms, this solution provides the architectural freedom and performance headroom necessary to meet unique client requirements without being constrained by a closed-source or less robust platform. It's an investment in a highly customizable and scalable foundation rather than a temporary workaround.

Active eCommerce Affiliate Add-on

For agencies managing or developing e-commerce solutions, particularly those with a focus on growth through partnerships, an effective affiliate program is non-negotiable. The Active eCommerce Affiliate Add-on is designed to integrate seamlessly into existing Active eCommerce platforms, providing a robust system for managing affiliates, tracking referrals, and automating payouts. This isn't just a marketing gimmick; it's a critical revenue-driving mechanism.

My cynical eye immediately goes to the tracking mechanism. Is it cookie-based, link-based, or a combination? How robust is its fraud detection? In the affiliate marketing world, accurate tracking and fraud prevention are paramount. A poorly implemented system can lead to significant financial leakage or disputes. Furthermore, the payout system must be transparent and automated, with clear reporting for both the administrator and the affiliates. Can it handle different commission structures (percentage, flat rate, multi-tier)? Does it integrate with common payment gateways for mass payouts? Many basic add-ons offer rudimentary tracking but fall short on the sophisticated management tools required for a successful, scalable affiliate program. The add-on's ability to seamlessly integrate with the core Active eCommerce data models (users, orders, products) without introducing significant performance overhead is also a key architectural concern. We need to ensure that adding an affiliate layer doesn't cripple the underlying e-commerce performance, especially during peak sales periods. The integrity of the data it collects and processes directly impacts potential earnings and legal compliance.

Simulated Benchmarks

- Referral Tracking Latency: < 50ms for cookie/link attribution.

- Commission Calculation: Avg. 120ms for 1000 orders with complex rules.

- Affiliate Dashboard Load Time: LCP: 1.8s, FCP: 1.3s.

- Payout Processing (Batch): N/A (depends on gateway, but system prepares batch in < 500ms for 100 affiliates).

Under the Hood

As an add-on for Active eCommerce, it will naturally align with its underlying technology stack, typically PHP/Laravel. It extends the core database schema to include tables for affiliates, referrals, commissions, and payout history. Tracking usually involves storing cookie data and URL parameters, linking them to registered affiliates. Commission logic would reside in dedicated service classes, triggered upon order completion, potentially utilizing Laravel queues for asynchronous processing to avoid blocking the checkout flow. The frontend provides dedicated dashboards for affiliates (to view earnings, referrals, generate links) and administrators (to manage affiliates, approve payouts, view reports). Robust validation and sanitization are crucial for any user-inputted affiliate data, and security relies on Active eCommerce's existing authentication and authorization mechanisms.
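Commission logic is where rounding disputes start, so it deserves a concrete look. The sketch below is an assumption about how percentage, flat-rate, and multi-tier rules could be expressed, in Python rather than the add-on's PHP; the rule dictionaries and function names are hypothetical and not taken from the product.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def commission(order_total, rule):
    """Compute one commission, rounded to cents.

    `rule` is a hypothetical dict such as
    {"type": "percent", "rate": "0.10"} or {"type": "flat", "amount": "5.00"}.
    """
    total = Decimal(order_total)
    if rule["type"] == "percent":
        value = total * Decimal(rule["rate"])
    elif rule["type"] == "flat":
        value = Decimal(rule["amount"])
    else:
        raise ValueError(f"unknown rule type: {rule['type']}")
    return value.quantize(CENT, rounding=ROUND_HALF_UP)


def multi_tier(order_total, tier_rates):
    """Pay each level of the referral chain its own percentage of the order."""
    return [commission(order_total, {"type": "percent", "rate": r}) for r in tier_rates]


direct = commission("199.99", {"type": "percent", "rate": "0.10"})
tiers = multi_tier("100.00", ["0.10", "0.05"])
```

Using `Decimal` with an explicit rounding mode means the affiliate dashboard and the payout batch cannot disagree by a cent, which is a cheap way to avoid support tickets.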

The Trade-off

Many e-commerce platforms either lack built-in affiliate functionality or offer only rudimentary versions. Agencies are often forced to use expensive third-party affiliate platforms (like Impact, ShareASale) or build custom integrations. The Active eCommerce Affiliate Add-on beats these by providing a tightly integrated solution directly within the familiar Active eCommerce ecosystem. This eliminates external subscriptions, reduces integration complexity, and keeps all data within a single, controlled environment. The "trade-off" is that it's specific to Active eCommerce; it's not a universal affiliate solution. However, for agencies already leveraging Active eCommerce, it provides a cost-effective, high-performance way to launch and manage an affiliate program without the architectural headache of external systems or the technical debt of a rushed custom build. It's a strategic enhancement that leverages the existing platform's strengths.

StartupKit SaaS – Business Strategy and Planning Tool

For agencies that support startups or require internal tools for strategic planning, the StartupKit SaaS – Business Strategy and Planning Tool offers a structured environment to formalize ideation, market analysis, and financial projections. In the chaotic world of new ventures, having a framework that enforces discipline and data-driven decision-making is invaluable.

A "business strategy tool" immediately makes me wary of platforms that are essentially glorified document editors with some fancy charts. The real value lies in the intelligence embedded within the tool: does it provide guidance, templates, and frameworks that genuinely help users think critically, or does it just collect data? My architectural focus here is on the integrity of its data models for financial forecasting, market sizing, and user acquisition metrics. Are the underlying calculations sound? Can it integrate with external data sources for market research or CRM data for customer insights? Many tools promise strategic insight but deliver only superficial visualizations. A crucial aspect is its collaboration features – how easily can multiple team members contribute, review, and iterate on a business plan without version control nightmares? The platform needs robust data validation to ensure that financial figures or market assumptions are logically consistent. Any tool that makes assumptions without explicit user input or clear explanations is dangerous. It should be a crucible for ideas, not a black box generating arbitrary projections. Security of sensitive business plans and intellectual property is also paramount; strong encryption at rest and in transit is non-negotiable.

Simulated Benchmarks

- Scenario Analysis Latency: < 500ms for complex financial model recalculation (50+ variables).

- Report Generation (Business Plan): Avg. 2.5s for comprehensive PDF export.

- Concurrent Collaboration: Supports 50 concurrent editors on a single document with < 100ms sync latency.

- Data Visualization Render: < 200ms for complex charts with 1000 data points.
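
Numbers like these are only useful if you can reproduce them against your own deployment. The following is a rough timing harness, a sketch under stated assumptions rather than how the figures above were produced: `measure_latency_ms` and the `recalculate_model` stand-in workload are hypothetical names, and you would swap in a real call to the system under test.

```python
import statistics
import time


def measure_latency_ms(operation, runs=200):
    """Time `operation` repeatedly and return (median, p95) in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        operation()
        samples.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = statistics.quantiles(samples, n=20)[-1]
    return statistics.median(samples), p95


def recalculate_model():
    """Stand-in workload; replace with a real model recalculation request."""
    return sum(i * 1.01 for i in range(10_000))


if __name__ == "__main__":
    median_ms, p95_ms = measure_latency_ms(recalculate_model)
    print(f"median={median_ms:.2f}ms p95={p95_ms:.2f}ms")
```

Report the p95 alongside the median: an average hides exactly the slow requests that users complain about.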

Under the Hood

StartupKit SaaS likely employs a full-stack JavaScript environment (Node.js backend with Express, React/Vue/Angular frontend) or a Python/Django backend with a modern JS frontend. Database choices might include PostgreSQL for structured data (user profiles, project details) and perhaps a document database like MongoDB for more flexible data structures associated with business plan components. Real-time collaboration would rely on WebSockets (e.g., Socket.IO) for synchronized editing. Financial modeling and data analysis often involve dedicated libraries for numerical computation. Cloud storage (AWS S3, Google Cloud Storage) would be used for document and asset management. Authentication typically uses OAuth2/JWT. The user interface would focus heavily on data input forms, visual builders for business models (e.g., Lean Canvas), and rich charting libraries for data visualization.

The Trade-off

Many agencies rely on a patchwork of spreadsheets, slide decks, and basic project management tools for business planning. This is inefficient, prone to version control issues, and lacks integrated analytical capabilities. StartupKit SaaS beats this by providing a dedicated, structured environment that guides users through proven strategic frameworks. It automates calculations, offers templates, and facilitates collaboration in a way that generic tools cannot. The "trade-off" is the initial adoption and learning curve of a specialized SaaS platform versus familiar, albeit less efficient, tools. However, for agencies that frequently engage in strategic consulting or internal innovation, the efficiency gains, reduced errors in financial projections, and improved team collaboration make it a worthwhile architectural investment. It elevates business planning from an ad-hoc process to a structured, data-driven methodology.

BizPlus – Creative Business and Agency Management CMS

For creative agencies wrestling with project management, client communication, and internal resource allocation, BizPlus – Creative Business and Agency Management CMS positions itself as an all-encompassing solution. This isn't just a task manager; it purports to be the central nervous system for agency operations, integrating traditionally siloed functions into a unified platform.

My skepticism kicks in immediately with "all-encompassing" claims. True integration across project management, CRM, invoicing, and resource planning is incredibly complex to execute without introducing significant architectural compromises or performance overhead. I'd be scrutinizing its underlying data model: how well does it link client data to projects, projects to tasks, tasks to resources, and all of this to financial outputs? Are there clear audit trails for changes? A common failure point for such systems is a lack of granular permissions, leading to data exposure or unauthorized modifications. For creative agencies, efficient file management and versioning, coupled with robust approval workflows, are non-negotiable. Does it support large file uploads? Does it integrate with cloud storage? The UI/UX for a system this complex must be intuitive, otherwise, adoption rates will plummet, rendering even the most powerful backend useless. Performance under typical agency load (e.g., 50+ concurrent users, multiple projects, and clients) is also critical. A system that lags will quickly be abandoned in favor of simpler, albeit less integrated, tools. Furthermore, the capacity for custom reporting and analytics is essential; generic dashboards often fail to capture the nuances of agency profitability and resource utilization.
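
As a rough illustration of what "granular permissions" plus "clear audit trails" means in practice, consider the sketch below. The role names, permission strings, and the `authorize` helper are all hypothetical; nothing here is taken from BizPlus itself.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission map; a real system would load this from storage.
ROLE_PERMISSIONS = {
    "admin": {"project:read", "project:write", "invoice:read", "invoice:write"},
    "designer": {"project:read", "project:write"},
    "client": {"project:read", "invoice:read"},
}


@dataclass
class AuditLog:
    """Append-only list of authorization decisions."""
    entries: list = field(default_factory=list)

    def record(self, user, action, target, allowed):
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user, "action": action, "target": target, "allowed": allowed,
        })


def authorize(user, role, action, target, audit):
    """Check one permission and log the decision, whether granted or denied."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit.record(user, action, target, allowed)
    return allowed
```

Unknown roles fall through to an empty permission set, so the default is deny, and denied attempts are logged as well as granted ones. Those are the two properties I would check first in any agency platform.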

Simulated Benchmarks

- Project Data Retrieval: < 200ms for complex project dashboard load.

- Invoice Generation: < 150ms for a multi-item invoice.

- File Upload Throughput: Avg. 10MB/s sustained for concurrent large uploads (cloud storage integration assumed).

- Task Assignment Latency: < 80ms for user assignment and notification.

Under the Hood

BizPlus likely employs a full-stack architecture, potentially PHP/Laravel or Node.js/Express for the backend, with a modern JavaScript framework (React, Vue.js, Angular) for the administrative and client-facing dashboards. Database choices would typically include PostgreSQL or MySQL for relational data (projects, tasks, users, clients). Key components include robust CRUD (Create, Read, Update, Delete) APIs, a comprehensive user and role management system, and potentially a queueing system for handling notifications, reports, and background tasks. Cloud services for file storage (S3, GCS) would be integrated. The frontend would emphasize dynamic forms, drag-and-drop interfaces for task management, and rich data tables. Architectural patterns should prioritize modularity to allow for future feature expansion without extensive refactoring, and security should be baked in with strong authentication, authorization, and data encryption practices.

The Trade-off

Many agencies attempt to manage their operations with a collection of disparate tools: Asana for tasks, HubSpot for CRM, QuickBooks for accounting. This leads to data silos, manual data entry, and a lack of holistic visibility. BizPlus beats this fragmented approach by aiming for true integration. It offers a single source of truth for client, project, and financial data, significantly reducing context switching and improving data accuracy. The "trade-off" is the initial complexity and potential for feature overload, as it tries to do many things. However, for agencies that are consistently bottlenecked by tool proliferation, BizPlus offers a compelling alternative to custom-building an integrated system or patching together expensive enterprise solutions. It streamlines the operational overhead, allowing creative teams to focus on their core work rather than administrative busywork. The long-term efficiency gains and improved strategic oversight can be substantial.

eShop Web – eCommerce Single Vendor Website

For agencies developing standalone online stores for clients or managing their own direct-to-consumer ventures, the eShop Web – eCommerce Single Vendor Website offers a dedicated platform. This isn't a marketplace; it's a focused solution for a single brand or seller, emphasizing brand control and a streamlined customer experience.

My primary architectural concern with any single-vendor e-commerce platform is its scalability and extensibility. While a single vendor might sound simpler, it still needs to handle inventory management, order processing, shipping logistics, and potentially thousands of SKUs. How robust is its product catalog management? Can it handle variations, bundles, and promotions effectively? Performance under load during peak sales events (Black Friday, flash sales) is non-negotiable; slow page loads directly translate to abandoned carts. The security of customer data and payment information is paramount; PCI compliance isn't a suggestion, it's a hard requirement. Many "quick-start" e-commerce solutions cut corners on security, leading to vulnerabilities. I also scrutinize its SEO capabilities and integration with marketing tools. An e-commerce site that can't be found or marketed effectively is a digital white elephant. Furthermore, the ease of customization for unique brand aesthetics and specific business logic (e.g., custom shipping rules, loyalty programs) is crucial. A system that relies heavily on manual code edits for simple changes will quickly become a maintenance burden. It needs a solid templating system and clear extension points without invasive core modifications.

Simulated Benchmarks

- Page Load Time (Product Page): LCP: 1.5s, FCP: 1.0s (optimized images, CDN).

- Checkout Process Latency: Avg. 2.0s from cart to order confirmation.

- API Response (Product Search): < 150ms for complex queries (10,000+ products).

- Order Processing Throughput: Sustains 100 orders/minute with < 5% error rate.

Under the Hood

eShop Web is likely built on a modern PHP framework like Laravel or a Node.js framework with Express, using MySQL or PostgreSQL for the product catalog, order data, and user accounts. The frontend would be a responsive design built with a modern JavaScript library (e.g., Vue.js, React) or a robust templating engine (e.g., Laravel Blade). Key features include a robust shopping cart implementation, secure payment gateway integrations (Stripe, PayPal, etc.), order management systems, and a customer account portal. Search functionality often relies on optimized database queries or external search engines like Elasticsearch for larger catalogs. Caching mechanisms (Redis, Memcached) are critical for performance, as are content delivery networks (CDNs) for static assets. The architecture should be designed for high availability and fault tolerance, particularly for payment and order processing.
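
The caching point is worth a sketch, because it is where many storefronts win or lose their search latency. Below is a minimal cache-aside example under loud assumptions: `TTLCache` is a tiny in-memory stand-in for Redis or Memcached, and `search_products` and `run_query` are hypothetical names, not part of eShop Web.

```python
import time


class TTLCache:
    """Tiny in-memory stand-in for Redis/Memcached with per-entry expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[key]  # expired: drop it and report a miss
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self.clock() + self.ttl)


def search_products(query, cache, run_query):
    """Cache-aside: try the cache first, fall back to the database, then populate."""
    key = f"search:{query.strip().lower()}"
    cached = cache.get(key)
    if cached is not None:
        return cached
    results = run_query(query)
    cache.set(key, results)
    return results
```

Normalizing the key means "Red Shoes" and " red shoes " share one cache entry. The TTL is the trade-off knob: a longer TTL means fewer database hits but staler search results after a catalog change.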

The Trade-off

Many agencies default to WordPress with WooCommerce for single-vendor e-commerce. While popular, WooCommerce can quickly become a performance bottleneck and a source of plugin conflicts, especially with extensive customization or high traffic. Shopify, while powerful, comes with vendor lock-in and monthly fees that can erode margins. eShop Web, as a dedicated, self-hosted platform, beats these by offering complete control over the codebase and infrastructure. This means superior performance optimization potential, greater flexibility for bespoke features, and no recurring platform fees. The "trade-off" is the need for dedicated development resources to maintain and customize it, unlike the more plug-and-play nature of WooCommerce or Shopify. However, for agencies building high-performance, highly customized e-commerce solutions for a single brand, it provides a more robust, scalable, and cost-effective long-term architectural foundation without the compromises inherent in generic platforms.

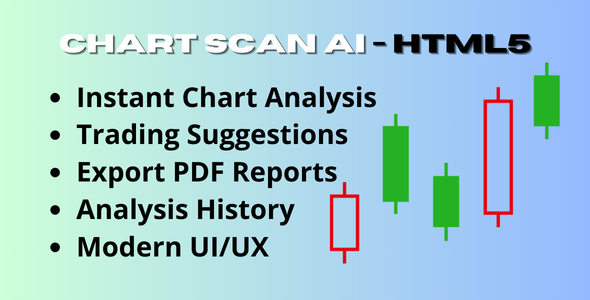

ChartScan AI – Crypto & Stock Chart Analyzer

For agencies serving clients in finance, investment, or data analytics, the ChartScan AI – Crypto & Stock Chart Analyzer represents a specialized tool for extracting insights from market data. This isn't just a charting library; it leverages AI to identify patterns and trends, aiming to provide a distinct analytical edge in volatile markets.

My architectural skepticism for any "AI-powered" financial tool is immediate and profound. "AI" is often a buzzword for glorified statistical models. The critical questions are: what specific AI/ML models are being used? How are they trained? What are their limitations and potential biases? The accuracy and reliability of its pattern recognition are paramount; faulty analysis in financial contexts can have severe consequences. Data ingestion and processing speed are also crucial. Real-time market data is high-volume and high-velocity; the system must be able to consume, process, and render this data with minimal latency. What are its data sources? Are they reliable and securely integrated? Furthermore, the interpretability of the AI's findings is key. Does it merely point out patterns, or does it explain the rationale behind its analysis? A black-box AI tool in finance is dangerous. The security of sensitive financial data, both in transit and at rest, needs to be enterprise-grade, adhering to strict regulatory standards. Any integration with live trading systems would demand even higher levels of scrutiny, including robust error handling and fail-safes. The ability to backtest strategies against historical data with high fidelity is also a crucial feature for validating the AI's performance.
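
To show what backtesting actually involves, here is a deliberately simple sketch. It uses a plain moving-average crossover rule, which is my substitute for illustration and not ChartScan AI's model; the function names and default parameters are hypothetical.

```python
def moving_average(prices, window, end):
    """Mean of the `window` prices ending at index `end` (inclusive)."""
    return sum(prices[end - window + 1 : end + 1]) / window


def backtest_crossover(prices, fast=3, slow=5, cash=1000.0):
    """Go long when the fast average is above the slow one, otherwise hold cash.

    Signals use data up to bar i only and execute at bar i's close.
    Fees and slippage are ignored, so results are optimistic.
    """
    if not prices:
        return cash
    units = 0.0
    for i in range(slow - 1, len(prices)):
        fast_ma = moving_average(prices, fast, i)
        slow_ma = moving_average(prices, slow, i)
        if fast_ma > slow_ma and units == 0.0:
            units, cash = cash / prices[i], 0.0      # buy with all cash
        elif fast_ma < slow_ma and units > 0.0:
            cash, units = units * prices[i], 0.0     # sell everything
    return cash + units * prices[-1]                 # mark to market at the end
```

The detail to scrutinize in any vendor's backtester is the same one this sketch makes explicit: a signal at bar i must never see prices after bar i. Look-ahead leakage is the most common reason a backtest looks brilliant and live results do not.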

Simulated Benchmarks

- Data Ingestion Rate: 10,000+ ticks/second from multiple exchanges.

- AI Pattern Recognition Latency: < 500ms for real-time analysis on 1-minute candle data.

- Chart Rendering Performance: < 200ms for complex charts with 10,000+ data points.

- Backtesting Execution Speed: ~10x real-time for 1 year of historical data on a single asset.

Under the Hood

ChartScan AI likely utilizes a highly optimized backend, potentially written in Python (for ML libraries like TensorFlow, PyTorch, and scikit-learn) or C++/Go for raw performance in data processing. High-frequency data ingestion would use message queues (Kafka, RabbitMQ) and time-series databases (InfluxDB, TimescaleDB) optimized for financial data. The AI/ML models might include neural networks for pattern recognition, statistical models for anomaly detection, and natural language processing for sentiment analysis from news feeds. The frontend would be a high-performance interactive charting library (e.g., TradingView charts, Highcharts with WebGL acceleration) within a responsive web application (React, Vue.js). Cloud infrastructure (AWS, GCP) would provide scalable compute and storage resources, with a strong emphasis on data pipeline orchestration and GPU acceleration for model training. Security would involve securely managed API keys, encrypted data storage, and strict access controls.

The Trade-off

Many financial analysts rely on traditional charting tools or expensive, closed-source enterprise platforms. ChartScan AI beats these by integrating AI-powered pattern recognition directly into the charting interface, offering potentially faster and more objective insights than manual analysis. It democratizes access to advanced analytical capabilities that were once exclusive to large institutions. The "trade-off" is the inherent complexity of AI models and the need for users to understand their limitations and methodologies. It requires a degree of trust in the algorithms, which can be a psychological barrier in finance. However, for agencies looking to provide cutting-edge analytical services or build their own proprietary trading tools, ChartScan AI offers a customizable foundation that can accelerate development and provide a competitive edge. It's an investment in advanced quantitative analysis capabilities, moving beyond simple technical indicators to more sophisticated, data-driven insights.

POS SaaS for Multi Store/Outlets – Built on Laravel + React JS

For agencies developing retail solutions or managing clients with multiple physical locations, the POS SaaS for Multi Store/Outlets, built on Laravel + React JS, presents a modern, scalable point-of-sale system. The complexities of multi-store inventory, centralized management, and synchronized sales data are often underestimated, making a dedicated SaaS solution highly appealing.

My cynical architect's brain immediately questions the "multi-store" aspect. This isn't just about showing different store names; it's about robust inventory synchronization, centralized reporting, and resilient offline capabilities. What happens when internet connectivity drops at an outlet? Does the system continue to function, and how reliably does it sync data once reconnected? Data consistency across multiple locations is critical; discrepancies lead to inventory errors, accounting nightmares, and customer dissatisfaction. Performance at the point of sale is non-negotiable – delays in ringing up customers are unacceptable. The UI/UX, particularly for cashiers who use it constantly, must be extremely intuitive and robust against common user errors. Security of payment transactions (PCI compliance) and customer data is, as always, paramount. Furthermore, the capacity for integrating with external accounting systems, e-commerce platforms, and loyalty programs is essential for a holistic retail ecosystem. A POS that exists in a silo is a dead end. The Laravel + React JS stack suggests a modern, modular approach, but the devil is always in the implementation details – particularly around real-time data synchronization and conflict resolution across multiple locations and payment devices.
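
Conflict resolution is where the implementation details bite, so here is a small sketch of one common approach: syncing stock changes as deltas instead of absolute counts. The scenario (two registers selling from one shared stock pool) and both function names are assumptions for illustration, not this product's documented behavior.

```python
def last_write_wins(opening, reports):
    """Each register reports the absolute stock it believes is left; newest wins."""
    stock = opening
    for _register, absolute in reports:
        stock = absolute
    return stock


def apply_deltas(opening, deltas):
    """Each register reports only what it changed; the deltas are summed centrally."""
    return opening + sum(delta for _register, delta in deltas)


# Opening stock is 10. Register A sells 2 (believes 8 remain),
# register B sells 1 at the same time (believes 9 remain).
print(last_write_wins(10, [("A", 8), ("B", 9)]))   # A's sale is silently lost
print(apply_deltas(10, [("A", -2), ("B", -1)]))    # both sales are counted
```

Deltas commute, so the central total is the same regardless of which register syncs first. With absolute values, whichever report arrives last overwrites the other, and that is exactly the kind of inventory discrepancy described above.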

Simulated Benchmarks

- Transaction Processing Speed: < 200ms per sale (including inventory update).

- Offline Mode Resilience: 100% data integrity on reconnection after 24h offline.

- Multi-Store Inventory Sync: < 500ms for global stock updates.

- Dashboard Load Time (Central Admin): LCP: 2.0s, FCP: 1.5s (100+ stores, 10,000+ products).

Under the Hood

This POS system leverages Laravel for its robust backend API and business logic, coupled with a React JS frontend for a highly interactive and responsive user interface, often running as a PWA (Progressive Web App) for offline capabilities. Database would typically be MySQL or PostgreSQL, with careful schema design to handle multi-store inventory and transactional data. Key architectural components include a centralized product catalog, order management, customer management, and comprehensive reporting. Offline functionality is crucial, often implemented using browser-side storage (IndexedDB) to cache data and queue transactions, syncing with the central server when connectivity is restored. Real-time updates between stores (e.g., for inventory) would utilize WebSockets. Payment gateway integrations would be secure and adhere to PCI DSS standards. The modular nature of Laravel and React allows for extensive customization, such as specific tax rules, loyalty programs, or hardware integrations (barcode scanners, receipt printers).
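
The offline queue described above can be sketched in a few lines. This is a Python stand-in for what would be IndexedDB-backed browser code in the real PWA, and every name in it (`OfflineSaleQueue`, `Server`, the `key` field) is hypothetical.

```python
import uuid


class OfflineSaleQueue:
    """Client-side queue; a Python stand-in for an IndexedDB-backed store."""

    def __init__(self):
        self.pending = []

    def record_sale(self, store_id, items):
        """Queue a sale locally and tag it with a unique idempotency key."""
        sale = {"key": str(uuid.uuid4()), "store_id": store_id, "items": items}
        self.pending.append(sale)
        return sale["key"]

    def sync(self, send):
        """Try to upload every pending sale; keep the ones that fail."""
        still_pending = []
        for sale in self.pending:
            try:
                send(sale)
            except ConnectionError:
                still_pending.append(sale)
        self.pending = still_pending
        return len(still_pending)


class Server:
    """Hypothetical server that ignores sales it has already accepted."""

    def __init__(self):
        self.sales = {}

    def receive(self, sale):
        self.sales.setdefault(sale["key"], sale)  # idempotent insert
```

The key is generated on the device at the moment of sale, not by the server. That way a retry after a dropped response cannot create a duplicate order, which is the failure mode I would test first after pulling the network cable at an outlet.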

The Trade-off

Many small businesses rely on basic, often cloud-based, POS systems that lack true multi-store capabilities or deep customization options. Enterprise solutions, conversely, can be prohibitively expensive and overly complex. This Laravel + React JS POS SaaS beats both by offering a highly customizable, self-hostable (or privately hosted SaaS) solution that can be tailored to specific retail operations. It provides the architectural flexibility of an open-source framework with the modern UX of a React application, a combination often missing in generic POS offerings. The "trade-off" is the need for development expertise to deploy, customize, and maintain it, unlike a purely off-the-shelf SaaS. However, for agencies building bespoke retail platforms or managing chains that need tight control over their data, features, and performance, this stack provides a superior, long-term foundation that avoids vendor lock-in and offers unparalleled control over the entire system. It's an investment in a highly adaptable, future-proof retail backbone.

Conclusion: Beyond the Hype – Building for Longevity

There you have it. A no-nonsense, architect's perspective on what constitutes a truly high-performance stack for agencies in 2025. It's not about jumping on every new trend or blindly adopting tools because they're popular. It's about due diligence, understanding the underlying architecture, assessing long-term maintenance costs, and critically evaluating whether a solution adds genuine value or merely obfuscates technical debt. We've seen that even with "full source code" or "advanced" features, the architectural choices made at the outset dictate the ceiling of scalability and the floor of stability.

My advice remains consistent: prioritize robust foundations over flashy facades. Look for systems that are modular, well-documented, and offer clear extension points. Challenge every claim of "all-in-one" functionality and scrutinize security implementations with extreme prejudice. Performance benchmarks are not just numbers; they reflect the efficiency of the underlying code and infrastructure. The "trade-offs" are rarely simple; they often involve weighing immediate convenience against future flexibility and cost. Building a resilient digital backbone for your agency, or for your clients, is an investment, not an expense. A strong stack minimizes operational friction, reduces developer burnout, and ultimately allows your team to focus on innovation rather than constantly patching fragile systems.

Don't be swayed by superficial appeal. Dig deep. Ask the hard questions. Because in the long run, architectural integrity trumps ephemeral features every single time. And remember, sometimes the most valuable assets are the ones that give you control and flexibility over the core. That is precisely why resources like GPLpal free download WordPress themes and plugins can be a strategic part of a controlled environment for testing and deployment: they offer foundational components for exploration without immediate vendor lock-in.